NEWS, EDITORIALS, REFERENCE

Implementing Factorial in 6502

Programming in 6502 has been an interesting adventure for me. I dabbled with it back 8 or 10 years ago. But I ultimately settled into coding most of my C64 projects in C. C felt sufficiently low level for my tastes. It was reasonably fast, much much faster than BASIC for example, there feels like a close 1-to-1 relationship between what you type and what eventually comes out the far end of the compiler. And it was still quite a learning curve before I was able to do much of anything useful.

Now that I'm not targeting the SuperCPU, even C feels bloated. The 6502 was just not designed for the sorts of contortions that C tries to put it through. For example, every time a C function is called the arguments are put onto the stack. The C function itself then manipulates those values on the stack as its working local memory space. This is really great for recursion or pre-emptive multitasking, but it pains me to think of what the 6502 must be doing to accomplish the stack referencing.

Once I'd decided that the stock C64 is what I will target for this particular project, I became fully committed to learning to do everything I need to do in 6502 assembly. At first it was a pretty big shock. And to be frank, it's often still shocking. Without the KERNAL and BASIC roms a C64 has almost nothing there upon which to write your code. You are literally writing against the registers of the I/O chips directly, and the only resources at your disposal to get anything done are the 6502/6510's 56 simple instructions. That sounds crazy. Well, how crazy is it? I wanted to try my hand at something both simple and complicated at the same time. Somehow I decided on writing a routine to compute factorial. How hard could that be? #FLW

Implementing Factorial in Javascript

In case you don't remember high school math, I'll remind you what factorial is. It's the product of all the numbers from a given number multiplied together counting down to 1. So, for example, 5 factorial (written "5!") would be 5 x 4 x 3 x 2 x 1 = 120. Writing an algorithm to compute the factorial of an arbitrary input is pretty simple, so I decided first to write it in Javascript. Since I'm a javascript programmer at work every day. It took me about a minute, maybe less, to see how it would work. I started with a recursive solution since that seemed the most elegant.

That's it! Pretty basic. If the input is 1, return 1. But if the input is more than 1 then return the product of this times the factorial of one less than this. Recursion as we know can break our minds, but it is certainly elegant if nothing else. The whole function is only 5 lines, and very short lines at that. But more, the properties of recursion feel like they best capture the essence of factorial as a mathematical concept.

The problem with recursion is that it takes up stack space for each iteration. When I run this in the javascript console of Safari 10 on macOS, it craps out after fac(25368). If I try fac(25369), it gives a: RangeError: Maximum call stack exceeded. However, this isn't the worst or the only problem. Because long before the stack is exceeded the result of the algorithm falls into Infinity. Infinity is what Javascript returns when a number is bigger than the biggest number it is capable of handling.1 It hits this after just fac(170), which it returns as: 7.257415615307994e+306, a very big number to be sure. fac(171) returns as Infinity.

The second problem, hitting the maximum handlable number, essentially crops up because factorial grows really really fast. On the other hand, many algorithms won't grow nearly so fast but still need lots of iterations to get the answer. You certainly don't want to crap out after just 25368 iterations because of a stack overflow. So my next stab at writing this was to use an iterative loop that involves no recursion.

Writing this version of factorial took me just one more minute, approximately. It's not much longer, only longer by one line. It feels slightly less elegant to my eyes, but it is undoubtedly significantly more efficient. When I try to find the maximum number of times it can iterate before crapping out... I'm unable to find that number. What happens is that the computer just starts taking longer and longer to compute for larger and larger input numbers. But they all eventually finish without hitting weird limits like the stack overflowing. Of course, for the last however many iterations they aren't doing much besides computing Infinity * nextNumber.

It's fair to say that writing a little function that'll compute factorial for you, in Javascript, is stunningly simple. If you're a programmer who already knows how to write some code, there's nothing to it. It's also fair to say, as you'll see, that getting the equivalent results out of 6502 ASM was much much harder.

Implementing Factorial in BASIC 2.0

But let's be even more fair. The Commodore 64 as a commercial product isn't just a 6510 processor on a bus hooked up to some RAM and I/O chips. It is that, but it also includes the KERNAL rom and, more importantly for the purposes of writing a factorial algorithm, the BASIC rom. BASIC was included with the computer precisely because it made writing programs and doing some fairly sophisticated math relatively easy and accessible to the average person.

BASIC, at its core, of course is just a bunch of 6502 code. About 8K of it in fact, permanently etched into a rom. So, anything we could do in BASIC is in a very real way something that can be done with 6502 assembly. But to get there from scratch first you'd have to write BASIC, or significant parts of it. This is not what we're going to be doing in our own 6502 implementation. But, first, let's look at how one could write factorial in BASIC.

This also only took about a minute. It might have even taken less time than the Javascript version because I'd already primed my brain to know exactly what to do. Note, that I didn't even attempt to do the recursive version in BASIC, that didn't make any sense, so I just skipped directly to replicating the iterative loop version. And it works very well.

I played around a bit to find the maximum number which BASIC could handle. It is quite small compared to what Javascript can handle. Turns out to be just 33. It spits out the result of 33! as 8.68331762e+36. As soon as I try to compute 34! I get an overflow error on the line that does the multiplication. That's okay though. Any number in scientific notation that goes to the power of 36 is a pretty big number. Most regular people doing regular math on their home computer aren't going to need numbers that big.

I wanted to check of the accuracy of BASIC though, just to be sure. And what I noticed is that it's accurate but a bit less precise. BASIC gives us 8 decimal places of precision and correctly rounds the final digit. Whereas the javascript algorithm gives us 14 decimals of precision. This can be attributed entirely to the underlying floating point number implementation of both environments. I know nothing about javascript's implementation, or its relationship to the floating point features of the more advanced CPUs it's running on. But I've done a bit of reading in The Advanced Machine Language Book For The Commodore-64 by Abacus Software2 on how floating point is implemented in BASIC on the C64.

Very briefly, a number in BASIC is stored as 5 bytes. 4 bytes are for precision, and store the most significant 32-bits worth of the number. The 5th byte is signed, and represents the offset of the decimal. By convention, within the 4 bytes the decimal is always presumed to fall after the first decimal number, and the 5th byte is adjusted to normalize any value that exceeds 9. So for example, 8 is stored as "8.000000..." with a decimal offset of 0. But 12 is stored as "1.200000..." with a decimal offset of 1. (I'm goin' from memory here, don't flay me if I'm a bit off in the precise details. Instead, leave a comment below!)

This is the reason why BASIC's precision stops after 8 decimal places. Because "868331762" (bearing in mind that by convention the decimal falls immediately after the leftmost 8,) when converted to hex is $33 C1 B0 F2, and that my friends is exactly 4 bytes. Cool stuff.

Implementing Factorial in 6502 Assembly

There is nothing wrong with writing some math algorithm in BASIC. If you were in high school and you had a Commodore 64 and you wanted to use it to help you with your homework, as I used to do, writing a quick little program in BASIC is absolutely ideal. Often much better and much more expressive than a mere calculator. That is the reason it was included with the machine in the first place. Because it adds tremendous utility to a home computer. It turns a bunch of chips into a powerful programmable computational tool. Nothing wrong with that!

However, what I really wanted to know is, what is the minimum amount of code necessary to write an algorithm like factorial, on the bare and essential features of the 6502 CPU. That's a very different question. If all I wanted to do was get the answer, and all I had was a C64, I'd use BASIC. But in 6502, where do we even begin? Well, let me show you.

The essential requirements of factorial are:

- The ability to loop

- The ability to decrement a counter

- The ability to multiply two numbers together, and

- The ability to determine that the answer has been arrived at and that the loop should end.

That's enough to compute factorial. But there is one other step that I consider at least tangentially necessary to complete the picture. You need to be able to see the answer, and so even though human readable output is not strictly part of the computation of factorial, it is close enough that I have to include it. Otherwise, there would be no way of knowing if the answer it arrived at is even right.

Fortunately, looping, decrementing a counter, and comparing to see if a counter has reached 1 and leaving the loop are all standard fare. Those things are super easy on a 6502. But what about multiplying two numbers together? Oops, as it turns out, there are no instructions that support multiplication directly. Not even two 8-bit inputs with an 8-bit output. Nothing. In order to do multiplication of any kind3 it is necessary to dig deep and figure out how multiplication fundamentally works and its relationship to addition and symbol shunting, such as bit shifting and rolling.

Multiplication

Whenever you plan to sit down and write an algorithm (or use someone else's hard work), you have to decide what precision and range you want to get out of it. In BASIC, that decision is effectively made for you, and the choice was made to use 32 bits of precision because that will handle a heck of a lot of normal use cases. One of the powers of writing the algorithm directly in 6502 is that if you wanted 64 or 128 bits of precision, you could do that. It puts the computer through more gyrations, but the possibility is there to do it. It is not possible to get more then 32 bits of precision out of BASIC.

It is safe to say that the multiplication routines for smaller bit widths is simpler than those for larger bit widths. And so two 8-bit inputs and an 8-bit result are definitely the simplest, but the range is so cripplingly limited as to be almost useless. 6! is 720, that's outside the range of an 8-bit result. The range of 16-bit doesn't take us much further. 9! is outside the range of 16-bit. However, as this is an experiment, I want to show how an 8-bit CPU can be used to do math beyond just 8-bits, but without unnecessarily bogging us down with the complexity of going to 32 or 64 bits. But rest assured, the same way the CPU has what it takes (namely thanks to the carry) to be able to extend math operations beyond 8-bit, the principle can be applied to extend to much larger bit widths too.

So here we have a 16-bit multiplication subroutine. It takes a 16-bit multiplier and a 16-bit multiplicand, and puts the product of the two in a 16-bit output. Note of course that there are lots and lots of combinations of two 16-bit inputs that will overflow a 16-bit output. The really safe way to deal with this problem is to use multiplication algorithms that have an output twice the width of the inputs. 16-bit by 16-bit input and a 32-bit output, which this routine doesn't do so bear that in mind.

Next, I did not write this routine. Sadly, it would have been beyond my ability. Instead I pulled it from this forum post on forum.6502.org. I modified it slightly to preserve the original inputs, and to improve clarity (in my personal judgement of what is clear.) There are other great examples of math algorithms with different levels of precision at Codebase64.

I don't want, nor am I well suited, to get into the mathematical details of how this works. I can say with some certainty though, that a multiplication can be acheived as successive additions. For example, if you have 13 times 5, you can initialize a running total as zero, and then in a loop add one of those two arguments to the total, decrement the other argument, and if it's not zero run the loop again. Thus adding 5 to a running total in a loop that loops 13 times. Or, the inverse, add 13 to a running total in a loop that loops 5 times over. There is indeed an addition stage in the algorithm above starting at the CLC inside the loop and ending just before the "skip" label. However, people far smarter than I am discovered a long time ago that there are certain efficiencies to be gained by doing the additions in other ways than merely doing the same addition some large number of times.

If you are multiplying two 16-bit numbers, the naïve way might mean you have to add 572 to the running total 65,000 times! Whereas, in the example above, the loop explicitly only loops 16 times, which is not coincidentally the number of bits in the output. Somehow by rolling the bits in the output, and rolling the bits in one of the arguments, it can be determined whether an addition need take place. Repeat 16 times and the correct answer magically appears. If you're into mathematical theory, you'd probably really enjoy analyzing this routine to death. For me, it is enough to know that it works, and it consists of 30 instructions. Or, 22 instructions if you don't care about corrupting one of the inputs in the process.

There is just one other thing I'd like to note about this routine. The inputs and the output are hardcoded references to what amount to global variables. The use of global variables is typically considered very bad form by modern computing standards. But it seems hard to avoid on the 6502. As it happens these global variables are in zero page, that was done so that all the access to them will be as fast as possible. But, I did test it and everything works just fine when they are not in zero page. Therefore, the product could be computed in a local variable, and could even be returned in the .X and .Y registers. However, these are not big concerns when literally the entire machine is at our disposal for just the one solitary purpose of computing factorial.

Factorial core routine

Now we can get to the core routine of factorial. Remember that the workspace variables for the multiplication have already been defined.

Comparing this to the Javascript function, or the BASIC program, they are actually quite similar. Before the "next" label we are simply initializing the factor. This is the number for which we want to get the factorial. Because a 16-bit output can only support factorial up to 8 (remember 9! is over 350,000) we really only need an 8-bit initial factor. This gets loaded into the low byte of the multiplier, and the high byte of the multiplier is set to 0. So if the factor is 6, the multiplier becomes $0006.

We enter the top of the loop and decrement factor. If it is now zero we leave the loop by branching to done. If not, we load the newly decremented factor into the multiplicand and JSR to mult16. Thus 6 x 5 ends up in product. Next we transfer product to the multiplier and loop back to the top. On the next iteration multiplier is prepopulated by with the result of 6x5 (30) and multiplicand is populated by the next decremented factor 4. Thus 30 x 4 ends up in product, and so on. Finally feels like we're making some progress.

Human readable output

That's all well and good, but how do we know if product contains the right answer unless we can see the output? The final product is just two bytes. But the problem is that those two bytes cannot simply be shoved onto the screen because they will represent some odd C64 Screencodes and we won't be able to interpret them as numbers. What we really want is to convert them to some base of numbers we can read.

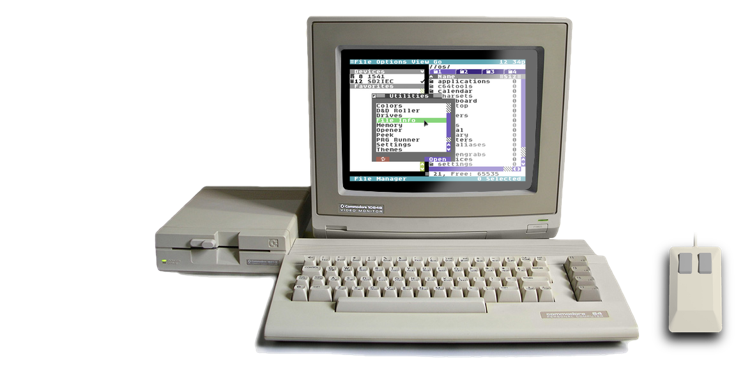

Number conversions to and from decimal is very cool, and it's something I'd like to get into in a future post. But conversion from binary to hexadecimal is significantly more straight forward. One byte of binary input always converts into exactly 2 bytes of hexadecimal output. Here's a routine that I keep handy in my own coding repertoire while I'm working on C64 OS. I usually put it in an include file so that I can bring it into my source with a .include "inttohex.s" directive whenever I need it. It came out of a discussion with someone on #c64friends on IRC.

This is a clever little routine. The hexits label is effectively a conversion table. The contents of the string are bytes that the assembler encodes in PETSCII. But the offset into the table is the conversion to the PETSCII representation of that value. So if you had the actual integer 7, and you index 7 bytes into hexits, you arrive at the PETSCII value "7" (which is actually the integer 55, but we never need to know that.) Perfect.

At the calling label "inttohex" you see the documentation style that I've grown accustomed to. It shows the register and a right-pointing arrow to mean input, followed by a description of what it should hold. The registers that are outputs are followed by a left-pointing arrow and then a description of them. So, put an 8-bit integer in the accumulator and JSR inttohex. You get back two PETSCII characters in X and Y, lo and hi nybbles respectively.

To do that we push the accumlator onto the stack to back it up, then shift right 4 times. That takes the hi nybble (4-bits) and shifts it into the lower nybble. The upper nybble is replaced by zeros. Transfer that to the X register to be used as an index into hexits. Grab the hi nybble PETSCII character and stash it in a temp variable. Restore the original accumulator from the stack and using AND zero out the upper nybble leaving only the lower nybble intact. Transfer that to the X register and use it to index into hexits to grab the PETSCII value for the lower nybble. Lastly, stick the low nybble in X, read the high nybble from the temp variable directly into the Y register and return. Bingo bango.

That's a fine way to convert one byte into two PETSCII characters that are an easily readable hexadecimal output. But how do we physically output them? Well, we could try shoving them into screen memory, and that would work. But I'm pretty comfortable at this stage using the KERNAL routines designed to output characters to the screen. In this case, CHROUT ($ffd2).

There are however, two bytes in a 16-bit output. And although the CPU is little endian and wants the lo byte first followed by the hi byte, that's not the order we want to see them in. To make things easy and clear, I wrote a little macro "out16." You pass it the address of the low byte, and it converts the hi byte (low byte + 1) to PETSCII and chrout's the result, hi nybble lo nybble. Then it calls inttohex on the lo byte, and chrout's its result, hi nybble lo nybble. And finally the macro outputs a carriage return so multiple calls to out16 will put the results on separate lines.

Putting it all together

It took a long time, and a lot of effort, but we've got pretty much all the parts together we need to make a complete and running program. Here's what the final source code, altogether, in TurboMacroProX format looks like, including comments, section separators and a little something extra I'll talk about in a moment.

This whole thing took me hours to put together, mostly because of a few gotchas I'm going to describe now. It's safe to say it took a lot less code and a lot less time to write that little 5 line Javascript function from the first examples. But, it's also remarkable how easy it was to do the same thing in BASIC. The power and simplicity of a high level language certainly seems worth the relative slowness of its execution. If what you needed could be accomplished in BASIC you'd be masochistic to do it in ASM. Not to mention the fact that adding user input to the BASIC program would be trivial, and its output is in decimal. Getting user input in ASM would be a whole new ordeal, and its output is readable but it's in nerdy hexadecimal rather than the much more common and user-friendly decimal.

I like to structure my code such that constants appear at the top, followed by the main code body, followed by subroutines that will be used and called by the main code body. I nearly tore my hair out trying to debug the meaning of "label not defined" on the calls to out16. Eventually I realized that the assembler wants macro definitions to appear in the code before anything attempts to use them. I'm not entirely sure why. So, I moved the out16 macro definition to appear just below the constants.

Next, you have to declare where in memory you want your code to assemble to. I chose $0801 because that's where BASIC programs are loaded into, and because it's an easy place that I know how to jump to with a SYS command. This decision may have been the most hair pulling of this experiment. As it happens, when you double click a .PRG file to open it in VICE (which is what I was testing in,) it not only loads it, but it also runs it automatically, by issuing the RUN command. I totally get why it does this. It's so that if you're using VICE with a one file game you can just double click it, VICE will launch, load and run the program for you, and boom you're playing a C64 game in an emulator and you didn't have to know even a single arcane C64 "command."

The problem with RUN is that it attempts to execute my code as though it were a BASIC program, which it's not. The result of this is that BASIC was actually corrupting my code before I had a chance to run it. So, what I would do is double click the file, wait for BASIC to mess it up and return to the ready prompt and then I'd issue an SYS2049 after that. It crashed immediately every time. This was very frustrating until I figured out what was happening.

The secret is to include a tiny prelude to your code, that is itself in fact a valid BASIC program that when run will jump to your real code. This trick is used all the time in C64 games and demos. I didn't have what that prelude would be handy but it's surprisingly easy to figure out. I simply wrote a basic program: 10 SYS2049 Then I saved that to "disk" which in VICE put the .PRG basic program back onto my Mac's desktop.

Opening that file with the excellent Mac utility, Hex Fiend, revealed the following BASIC program bytes:

01 08 0B 08 0A 00 9E 32 30 34 39 00 00 00

The first two bytes are the load address, 01 08, $0801, so we can ignore them, they won't end up in memory. The next 2 bytes are a pointer to the start of the next BASIC line, 0B 08, ($080B). The next two bytes are the 16-bit program line number, 0A 00, ($000A, or 10) and that 9E must be the token for SYS. The next four bytes, 32 30 34 39, reveal very clearly the 2 0 4 9 conveniently visible in the lower nybble of each hex value. And the last 3 bytes 00 00 00 are the basic program terminating sequence. In other words, the basic program will occupy (2 + 2 + 1 + 4 + 3) 12 bytes in memory. Which means if the BASIC prelude starts at $0801 (2049), then my ASM code will start at 2049 + 12 or 2061. Thus we can just change the BASIC prelude so the SYS will go to 2061 instead of 2049 and we get a BASIC prelude like this:

0B 08 0A 00 9E 32 30 36 31 00 00 00

And so that is exactly what we put into the assembler as a sequence of bytes that immediately precedes the start of the main body code. And it works perfectly.

*=$0801 ;Basic Prelude, 10 SYS 2061 .byte $0B,$08,$0A,$00,$9E,$32,$30,$36,$31,$00,$00,$00

And there we have it running in VICE. I added one more #out16 to the body code so we could see the output of the multiplier before beginning to compute its factorial, just so we can see in the screenshot the two values. The first output is 0008 (8 in decimal), and the result is printed as 9D80 (40320 in decimal), and that is the correct answer! Hurray!

Download the PRG here

You can run that in VICE, or on a real C64 and see that it in fact works. Or analyze it with a Hex Editor. Note that it's hardcoded to compute 8!.

I'll be the first to admit that it took a lot of work, and a lot of effort, and the result is less capable than what I got with BASIC, but it feels good. It feels like we're at rock bottom. There is no code running that we are not explicitly aware of (with the exception of those final chrouts.) It's like painting a picture by applying every single stroke of paint to the canvas by hand.

The other thing I should say before signing off, is that in practice it doesn't feel quite this difficult when writing ASM code for C64 OS. First of all, I have yet to need multiplication even once. And second, after you've been working on your project for a while all the surrounding structure such as where to put constants and macros, what address to load your code to, and even output, become solved problems that you stop thinking about on a day to day basis.

That's it for this post. I consider that worthy of the Technical Deep Dive category.

- Actually Javascript is bit wonky around big numbers. Number.MAX_VALUE returns 1.7976931348623157e+308. If you check the equality of Number.MAX_VALUE == Infinity it returns false. However, if you check the equality of Number.MAX_VALUE == Number.MAX_VALUE + 1 it returns true! That certainly feels like a behaviour unique to Infinity. [↩]

- I'd like to point out just how incredibly awesome it is that these classic books are available for free at Archive.org. I read the Geos Programmers Reference Guide cover to cover on Archive.org. Although, I have Advanced Machine Language in paperback, and do prefer a paper copy in most cases, sometimes it's nearly impossible to get your hands on a physical copy. [↩]

- Actually, there is one kind of multiplication, and division, that can be done with the built in instructions. Multiplying by two can be accomplished by shifting all the bits left one position. And dividing by two can be done by shifting all the bits right on position. Using the carry shifts can be done on higher bytes that shift in a bit that fell of the edge of a lower byte, thus enabling shifts that are 16-bit, 32-bit, 64-bit and more. [↩]

Do you like what you see?

You've just read one of my high-quality, long-form, weblog posts, for free! First, thank you for your interest, it makes producing this content feel worthwhile. I love to hear your input and feedback in the forums below. And I do my best to answer every question.

I'm creating C64 OS and documenting my progress along the way, to give something to you and contribute to the Commodore community. Please consider purchasing one of the items I am currently offering or making a small donation, to help me continue to bring you updates, in-depth technical discussions and programming reference. Your generous support is greatly appreciated.

Greg Naçu — C64OS.com