
Command and Control

In the last month or so, I've been working on Utilities: how they are loaded and how they are quit, how they load and save their configuration data, and how they efficiently redraw. I'm eager to write in detail about Utilities and how everything comes together around them. However, before I do that, I need to take a bit of time to document my plans and work on something a bit more low level. I've been thinking about what exactly to call this lower level system, and I've decided to call it Command and Control.

Command and Control is the flow of execution, the order of event processing, and the execution of processes and timers. I've discussed in other posts already many of the elements that will be covered in this post, but this post will bring them together into a more coherent picture. Also I've done more of the programming legwork since the time when I was just planning. Some ideas have gone by the wayside and others, I've never mentioned before, have crept in. So, no need to go any more meta than that, let's just talk about the details.

Interrupt Service Routine and Events

Whatever the CPU happens to be working on, the details of which we'll get into below, the IRQ is triggered by CIA1 60 times a second. This is the standard rate; the KERNAL also configures the system this way at start up. The main system timing is therefore built around 1/60th of a second.

The interrupt service routine makes use of I/O and the KERNAL, and so it is essential that these are mapped in whenever it is time to service the IRQ. When the CPU is interrupted, it hops through an IRQ vector which it finds at the end of memory, $FFFE and $FFFF.[1] You don't have much control over the state of the machine when an IRQ goes off. But you do have some. Initially, if I ever needed to patch out the KERNAL I would be very meticulous about first executing SEI (SEt Interrupt mask bit), so that IRQs would be ignored, and then, after patching the KERNAL back in, executing CLI (CLear Interrupt mask bit). Unfortunately, this is not without its issues.

From Compute!'s Mapping the Commodore 64 and C64c, pg. 245

It is the Interrupt Service Routine that reads and updates the position of the mouse cursor. If you disable interrupts for too long, the consequence is that the mouse becomes jerky and unresponsive. It is really a subpar experience.

Now, if the KERNAL is patched in when the IRQ goes off (and it's not masked), the code that is in the KERNAL rom itself occupies the memory where the vector is found. The KERNAL therefore provides a vector to itself. It points to $FF48, which is also in the KERNAL. That routine does some minor preparatory work to backup the registers, (it also determines if it was a genuine interrupt or if it was a BRK instruction, because they both cause the CPU to jump through this same vector,) and then jumps through a vector stored in RAM at $0314/$0315 (or $0316/$0317 if it was a BRK instruction).

At boot up the KERNAL copies a table of vectors (hardcoded in the KERNAL rom) into workspace ram ($0000—$03FF). Thus, at boot up the KERNAL plugs values into $0314/$0315 that point back to a more general interrupt service routine elsewhere in the KERNAL. When interrupted, flow goes first through the vector at $FFFE/$FFFF to the preparatory routine, then this jumps through $0314/$0315 to the general service routine.

Among some other tasks, (updating the jiffy clock, blinking the cursor, etc.) the general service routine scans the keyboard and potentially buffers a PETSCII character, and then it ends. At boot up a C64 absolutely depends on having the KERNAL patched in. The lower 3 bits of address $0001 (the 6510's processor port) are wired to the PLA to determine which combination of BASIC/KERNAL/IO/CHARSET and RAM should be mapped into address space. If you POKE1,53 shortly after starting your C64, it will freeze solid. Doing so patches out the KERNAL, but without the KERNAL the cursor can't blink, the keyboard can't be scanned, everything basically comes to a grinding halt.
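To make the role of those three bits concrete, here is a small sketch in Python (not the assembly C64 OS is written in) that decodes the low three bits of the processor port into what the CPU sees in each switchable region. It assumes no cartridge is inserted, and the function name and the returned dictionary are illustrative only.

```python
def decode_memory_config(port_value):
    """Decode the low three bits of $0001, assuming no cartridge is present."""
    loram = bool(port_value & 0b001)   # bit 0
    hiram = bool(port_value & 0b010)   # bit 1
    charen = bool(port_value & 0b100)  # bit 2

    # BASIC is only visible when both LORAM and HIRAM are set.
    basic = "BASIC ROM" if (loram and hiram) else "RAM"
    # The KERNAL is visible whenever HIRAM is set.
    kernal = "KERNAL ROM" if hiram else "RAM"
    # $D000 shows I/O or the character ROM unless both LORAM and HIRAM are clear.
    if loram or hiram:
        d000 = "I/O" if charen else "CHAR ROM"
    else:
        d000 = "RAM"
    return {"$A000": basic, "$D000": d000, "$E000": kernal}


print(decode_memory_config(55))  # power-on default
print(decode_memory_config(53))  # the POKE 1,53 case from the text
```

With the value 53 the $E000 region reads as RAM, which is why the stock interrupt handler can no longer be reached and the machine appears to freeze.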

The thing is though, there is 8K of ram hidden beneath the KERNAL rom. C64 OS wants to make use of it, but, all of the above considered, it's hard to use properly.

A great trick I learned from JBevren (David Wood) on IRC, is to configure two different base IRQ handlers. When the KERNAL rom is not patched in, the CPU sees ram at $FFFE/$FFFF. Therefore, into this ram you can put a pointer to your own custom routine that will patch the KERNAL in, then call your general IRQ service routine and at the end, patch the KERNAL back out before the RTI (ReTurn from Interrupt) so ram beneath the KERNAL becomes visible again. This is how C64 OS is configured, and it works beautifully. Here's how I implemented that:

Initirq is run by the booter. It puts a pointer to romirq into $0314/$0315, and a pointer to ramirq into $FFFE/$FFFF. Romirq is run when the KERNAL is patched in; in that case the KERNAL has already done its own prep work and BRK check. Romirq merely jumps to c64os_service, which I haven't shown here. And after that it jmps to endirq, which restores the registers and does the RTI.

Ramirq, though, will be jumped to directly by the CPU when the KERNAL is not patched in. It therefore does the prep work of backing up A, X and Y. Then it reads the processor port, masks away all but the low three bits, and writes it into the immediate argument of the ORA instruction below. Then it calls a C64 OS routine seeioker, which patches in I/O and the KERNAL (but not BASIC.)

Next, it does the BRK check, just as the KERNAL does. If it's a break, it JMP()'s through the KERNAL's BRK vector, and everything probably crashes at that point. I haven't figured out how to gracefully handle this situation yet. It should probably crash the running app, and return the user to the current Homebase.

Otherwise, it JSR's directly to c64os_service, to perform the real work. It cannot JMP($0314), though. Because, if it did, it would miss the extra step of restoring the processor port at the end. By calling c64os_service directly, it returns to the small chunk of code just before endirq. This restores the lower 3 bits of the processor port by using the self-modded immediate argument written to the ORA earlier. Then it falls through to endirq, which restores the other registers and does the RTI. It works a charm.
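The save, map in, service, restore sequence of ramirq can be modelled in a few lines. This is a Python sketch of the logic only, not the assembly itself: the Machine class is a toy, the bit pattern used by seeioker is an assumption (%110, which selects I/O and KERNAL without BASIC), and the register backup and BRK check are left out.

```python
class Machine:
    """Toy model in which only the processor port at $0001 exists."""

    def __init__(self, port):
        self.port = port
        self.seen_by_service = []


def seeioker(machine):
    # Assumed pattern: %110 maps in I/O and the KERNAL, but not BASIC.
    machine.port = (machine.port & 0xF8) | 0b110


def c64os_service(machine):
    # Stand-in for the real service routine: record the mapping it runs under.
    machine.seen_by_service.append(machine.port & 0x07)


def ramirq(machine):
    saved = machine.port & 0x07   # what gets written into the ORA operand
    seeioker(machine)             # make I/O and the KERNAL visible
    c64os_service(machine)        # do the real work
    # Equivalent of clearing the low bits and ORA-ing the saved value back in.
    machine.port = (machine.port & 0xF8) | saved


m = Machine(0x34)  # all RAM visible when the interrupt arrives
ramirq(m)
```

After the call the port holds its original value again, while the service routine ran with I/O and the KERNAL mapped in.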

In C64 OS, regardless of whether the KERNAL and I/O are mapped in or out, except for under rare and special circumstances, you do not need to mask interrupts. When an IRQ occurs, the OS ensures that the KERNAL and I/O are automatically mapped back in, just long enough to run the service routine, and then restores the mapping to its previous state. The c64os_service routine does the following:

- The mouse cursor position is updated

- Mouse buttons are scanned

- Mouse events are generated

- The keyboard is scanned

- Keyboard command events are generated

- Printable key events are generated

There is also the jiffy clock and logic for cursor blinking, but we'll ignore those for now.

The mouse buttons are scanned first. They can be detected by disabling all of CIA1's row scanning port lines. This means, nothing pressed on the keyboard can drag low the column port lines.[2] However, if a column port line is still pulled low, that's because the mouse buttons are being pressed. Because the mouse and keyboard share the same CIA port lines, only limited parts of the keyboard can be accurately read while mouse buttons are held down. This is the reason mouse buttons are scanned first. Now, if mouse buttons are down, fortunately, the CONTROL, COMMODORE, and LEFT-SHIFT keys can still be scanned on the keyboard without interference. Therefore mouse events can be constructed that include a set of bitflags for each of these three keyboard modifiers. Up to 3 Mouse events can be queued.

If the mouse buttons are not held down, then the entire keyboard can be scanned. It is scanned for keyboard command events; these are any keys pressed in combination with either the Control or Commodore keys. Key command events are buffered separately from mouse events, and up to 3 of these can be queued at the same time. Lastly, if no modifiers were held down, any other pressed keys can be converted into printable key events, much as the KERNAL's own key scanning routine does. C64 OS can queue up to 10 PETSCII characters into the same keyboard buffer the KERNAL uses.
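The scanning order and the three queue limits can be sketched as follows. This is a Python model, not the real service routine: the flag values, the tuple layout of an event, and the choice to drop an event when its queue is full are all assumptions made for illustration.

```python
from collections import deque

MOUSE_QUEUE_MAX = 3    # up to 3 mouse events
KEYCMD_QUEUE_MAX = 3   # up to 3 key command events
KEY_BUFFER_MAX = 10    # up to 10 PETSCII characters

# Hypothetical modifier flag values.
CTRL, CBM, LSHIFT = 0x01, 0x02, 0x04

mouse_queue = deque()
keycmd_queue = deque()
key_buffer = deque()


def enqueue(queue, limit, item):
    """Store item unless the queue is full (assumed behaviour: drop it)."""
    if len(queue) >= limit:
        return False
    queue.append(item)
    return True


def service_input(buttons, modifiers, key):
    """One pass over the inputs: mouse buttons first, then the keyboard."""
    if buttons:
        # With a button down only the three modifiers can be read reliably.
        flags = modifiers & (CTRL | CBM | LSHIFT)
        enqueue(mouse_queue, MOUSE_QUEUE_MAX, ("mouse", buttons, flags))
        return
    if key is None:
        return
    if modifiers & (CTRL | CBM):
        enqueue(keycmd_queue, KEYCMD_QUEUE_MAX, ("keycmd", modifiers, key))
    else:
        enqueue(key_buffer, KEY_BUFFER_MAX, key)
```

Calling service_input with a button held never touches the two keyboard queues, which mirrors the reason the buttons are scanned first.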

This post, The Event Model, goes into much more detail on everything that is summarized above. And even though it's from a year and a half ago, not much of it, if anything, has changed.

Layers (Screen Layers)

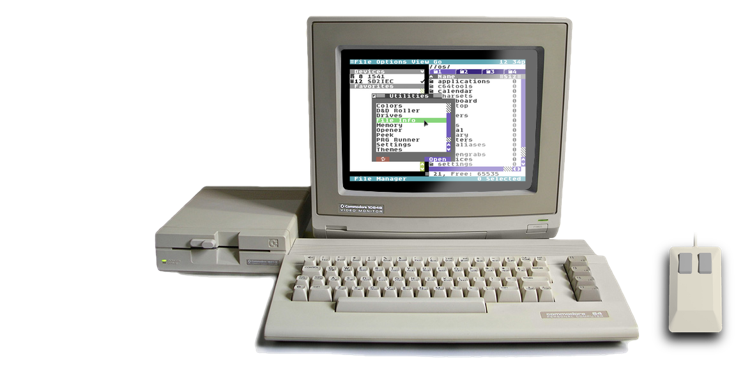

C64 OS is single tasking, not multitasking. However, execution is not all owned by and controlled from a single all seeing perspective, the way a game or a truly single-purpose program is.

A traditional piece of C64 software, a program you'd load and run, is truly singular with complete ownership of the machine and all of its resources. Take a simple example, like FCOPY, the file copier that CMD shipped with its hard drives and floppy drives. FCOPY owns the whole screen, it draws to the screen where it wants and when it wants. It owns the CIAs, and the IEC bus. It owns the keyboard, and it implements a simple fixed single-key-press menu system. A lot of C64 software works like this.

FCOPY, ©1993 CMD.

On the other end of the spectrum of computing environments, you have pre-emptive multitasking, with advanced timeslicing kernels. I've argued at length, though sprinkled throughout my writing, that while a pre-emptively multitasking kernel is possible on the 6510, the architecture of the CPU was truly not designed for this, and the result is something slow and inefficient, without a whole lot of upside.

In between lies a range, from how GEOS works (which is on the primitive side), to how C64 OS works (which has a limited form of cooperative multitasking), to what the classic Mac OS had (which was essentially the pinnacle of cooperative multitasking).

C64 OS has a set of stacked layers prioritized by their index. The higher in the stack, the more priority a layer has over the layers below it. Above them all sits the system's own Interrupt Service Routine, discussed above, which has the highest priority because, well, it interrupts everything else. C64 OS supports 4 layers (indexes 0 through 3). The system, at boot time, installs the menu system into Layer 3, the topmost layer, giving it first priority.

The main application that is loaded and run begins by pushing a single layer structure onto the stack. Pushes start at 0, then 1, then 2, and layers are popped in the reverse order. The menu system remains fixed at layer 3 regardless of what else gets pushed and popped below it. A layer consists of an 8-byte structure, four 2-byte vectors. The vectors are to:

- A draw routine

- A mouse event handler

- A key command event handler

- A printable key event handler

A layer is intimately related to the event loop, and to the event model. These vectors must be set to something valid (not to $0000), but they can be set to the system defined nullvector which simply points at an RTS. This can be used if the layer wants to be transparent to one of these low-level event types.

Here's a small sample of code, from the initialization of a sample app. You load a pointer to the screen layer struct into .X and .Y (I refer to this as a RegPtr, .X low, .Y high, and have a set of macros for loading and storing them: #ldxy, #rdxy, #stxy). Then you call layerpush to push the screen layer struct onto the stack. The app's initialization code should call markredraw, as well.
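As a rough model of the layer structure and stack described above, here is a Python sketch (Python, not the 6502 assembly of the real system). Layerpush and nullvector are names from the text; layerpop, the class names and the error handling are hypothetical, and the handlers are modelled as callables that return True where the real routines would return with the Carry set.

```python
def nullvector(*args):
    """Stand-in for the system nullvector: do nothing, let the event pass."""
    return False


class Layer:
    """Four vectors, matching the 8-byte layer structure."""

    def __init__(self, draw=nullvector, mouse=nullvector,
                 keycmd=nullvector, keyprint=nullvector):
        self.draw = draw
        self.mouse = mouse
        self.keycmd = keycmd
        self.keyprint = keyprint


MENU_INDEX = 3  # the menu layer is fixed at the top


class LayerStack:
    def __init__(self, menu_layer):
        self.slots = [None, None, None, menu_layer]
        self.count = 0  # layers currently pushed below the menu

    def layerpush(self, layer):
        if self.count >= MENU_INDEX:
            raise OverflowError("no free layer below the menu layer")
        self.slots[self.count] = layer
        self.count += 1
        return self.count - 1  # index the layer landed on

    def layerpop(self):
        if self.count == 0:
            raise IndexError("no layer to pop")
        self.count -= 1
        layer = self.slots[self.count]
        self.slots[self.count] = None
        return layer
```

An application would push its layer at index 0 during initialization; a Utility loaded afterwards lands at index 1, and the menu layer at index 3 is never displaced.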

Event Distribution (Event Loop)

The event loop in the C64's KERNAL rom consists of a loop that is, believe it or not, just 4 instructions long.

It loads the keyboard buffer index. It stores it into two places in workspace memory as flags that affect cursor blinking and screen scrolling. Then, if it's zero, it branches back to read the keyboard buffer index again. And it does this forever, until the interrupt service routine, which is continually scanning the keyboard, puts a PETSCII character in the buffer. At that point, this very simple event loop notices that the buffer index is no longer zero, and proceeds to process the character. Under normal circumstances, however, it quickly settles back into its 4-instruction loop waiting for another key to be pressed.

C64 OS is definitely more advanced, but not extraordinarily more complex.

In C64 OS, the event loop first checks the mouse events queue for the presence of a mouse event. If there are none, it checks the key command event queue for the presence of a key command event. If there aren't any, it checks the printable key event buffer for a PETSCII character. If there are none of those, then before it loops it makes a call into the timer module to give registered timers an opportunity to fire if any of them have expired. Then it checks the redraw flags, and if any of those are set it goes into a redraw cycle. After that, it loops back up to the top to check for more mouse events.
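The order of checks in one pass of the loop can be sketched like this. It is a Python model under a stated assumption: each pass handles at most one event from each queue, in sequence, before moving on to timers and redrawing. The function and parameter names are illustrative, not C64 OS routine names.

```python
def run_one_iteration(queues, dispatch, fire_expired_timers,
                      redraw_pending, redraw):
    """One pass of the main event loop.

    queues: dict with 'mouse', 'keycmd' and 'key' lists, oldest entry first.
    """
    for kind in ("mouse", "keycmd", "key"):
        queue = queues[kind]
        if queue:
            dispatch(kind, queue[0])  # the event stays at the head while handled
            queue.pop(0)              # then the loop shifts it off and discards it
    fire_expired_timers()             # timers get their chance before redrawing
    if redraw_pending():
        redraw()


log = []
queues = {"mouse": ["m1"], "keycmd": [], "key": [65]}
run_one_iteration(
    queues,
    dispatch=lambda kind, event: log.append((kind, event)),
    fire_expired_timers=lambda: log.append("timers"),
    redraw_pending=lambda: True,
    redraw=lambda: log.append("redraw"),
)
```

The point of the ordering is that drawing happens once, at the end, after every source of change has had its turn.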

Let's suppose that the user presses the primary mouse button down. The interrupt service routine notices this and enqueues a mousedown event at the coordinates where the mouse cursor is. The main event loop very soon after notices that there is a mouse event in the queue. To handle the mouse event, it loops over the layers, starting at the highest index, and jumps through that layer's mouse event handling vector.

That layer's routine is now in control. The event is never passed around, it is simply left at the start of its queue. Routines that want to access it can make a call to the input module to get a copy of the current event. The layer in control can do anything it wants with the event. It is free to be as low level as it wants, or to call into some higher level code, such as the C64 OS ToolKit module, to use the event. The layer can even manipulate the event, changing its type, flags or coordinates. The effect of the event may lead to a change in the data model(s) managed by whatever code pushed the layer, and if the result of a data model change is that the visual appearance of this layer has changed, then the code makes a system call to mark the layer for needing a redraw. Then it returns.

Simple Event Loop Flowchart

When the mouse event handler of a layer returns, it uses the Carry bit to signal whether the event should propagate to a lower layer or not. If the handler returns with the carry clear, then the next layer down the stack will have its mouse event handler routine called. This routine has no way of knowing that a higher layer may have modified the event before it got a shot at handling it. It simply handles it just as the higher layer had an opportunity to handle it.

With each layer getting a shot at handling the event, in order, eventually when there are no more layers to go through, or when a layer ends propagation, that event has been dealt with. The main event loop itself shifts that event off the queue and discards it automatically.
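Top-down distribution with Carry-controlled propagation can be modelled in a few lines. In this Python sketch a handler returning True stands for returning with the Carry set (stop), and False for Carry clear (propagate). The dictionary representation of a layer and the helper names are assumptions for the example.

```python
def dispatch_event(layers, handler_name, event):
    """Offer the event to each layer, highest index first.

    Returns the index of the layer that stopped propagation, or None if
    every layer let the event pass.
    """
    for index in range(len(layers) - 1, -1, -1):
        layer = layers[index]
        if layer is None:  # empty slot in the layer stack
            continue
        carry_set = layer[handler_name](event)
        if carry_set:
            return index
    return None


calls = []


def passthrough(name):
    def handler(event):
        calls.append(name)
        return False  # Carry clear: keep propagating
    return handler


def consume(name):
    def handler(event):
        calls.append(name)
        return True   # Carry set: stop here
    return handler


layers = [
    {"mouse": passthrough("app")},
    {"mouse": consume("utility")},
    None,
    {"mouse": passthrough("menu")},
]
stopped_at = dispatch_event(layers, "mouse", {"type": "mousedown"})
```

Here the menu layer sees the event first and lets it through, the Utility consumes it, and the application's handler is never called.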

Next, the event loop goes on to check for the presence of keyboard command events. If there is a keyboard command event, then the order of execution happens again. The topmost layer has its key command event handler called. And so on.

Here is a block diagram I put together of the general flow of data. Some parts of the diagram we'll cover below. The green boxes are physical hardware. The blue boxes are chips in the C64. The yellow boxes are software that is part of C64 OS itself. The orange boxes are software that is part of the application and utility code which gets loaded and unloaded at runtime by the user.

C64 OS Command and Control Flow Block Diagram

Menu Layer

As mentioned above, the system, at boot time, installs the menu layer at the top of the layer stack. At the time of this writing, the menu layer does not implement a printable key event handler. So, any single key that is pressed automatically by-passes the menu layer and is delivered to the next layer down.

When a mouse event occurs, though, the menu layer always has the first shot at handling it. It uses its own internal state and logic to determine if the event is interacting with the menus. For example, if no menu is currently open, and a mouse down event occurs, the menu layer uses the coordinates of the event to know if the mouse was over the menu bar when the button was pressed. If it was, then the menu system changes its own state to become aware that the user is interacting with it. Subsequent tracking events are trapped by the menu layer, processed and not allowed to propagate to lower layers.

I've written quite a bit about the menu system, so if you're interested you can read more here:

Interaction with the menu system comes to an end when it gets the mouse up event. Each menu item has, at a minimum, a label and a 1-byte action code. If the user's interactions with the menu caused him or her to trigger a menu item, then the action code is sent immediately to the application. More on this in a moment.

In addition to observing and handling mouse events, the menu layer has a handler for key command events. And because it is the topmost layer, it always gets the first shot at handling any key command events. Menu items may optionally have a byte for modifier key flags and another byte for a shortcut key. Whenever a key command event is handled by the menu layer, the menu system recursively searches the hierarchy of nested menus looking for an item that matches the modifier key mask and shortcut value. If a match is found, the menu system immediately sends the action code to the application, via its dispatch table, just as though the mouse had been used to select the item. After which the menu system will return to the event loop with the Carry set. This prevents the key command event from propagating to lower layers.
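The recursive shortcut search might look something like the following. This is a Python sketch with made-up data: the dictionary layout of a menu item, the flag value for the Commodore key, and the use of an exact match on the modifier byte are all assumptions, not the actual C64 OS menu format.

```python
CBM = 0x02  # hypothetical flag value for the Commodore key


def find_action(items, modifiers, key):
    """Depth-first search of nested menus for a matching shortcut.

    Returns the item's action code, or None when nothing matches.
    """
    for item in items:
        if "submenu" in item:
            action = find_action(item["submenu"], modifiers, key)
            if action is not None:
                return action
        elif item.get("modifiers") == modifiers and item.get("key") == key:
            return item["action"]
    return None


# Example menu hierarchy; labels, keys and action codes are invented.
menus = [
    {"label": "File", "submenu": [
        {"label": "Open", "action": 1, "modifiers": CBM, "key": ord("O")},
        {"label": "Recent", "submenu": [
            {"label": "Clear List", "action": 2},
        ]},
    ]},
    {"label": "Edit", "submenu": [
        {"label": "Copy", "action": 3, "modifiers": CBM, "key": ord("C")},
    ]},
]
```

A match returns the same 1-byte action code that a mouse selection of that item would produce, so the application cannot tell which input method triggered it.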

Application Dispatch Table

Every application begins with a small dispatch table. The table consists of a set of vectors to routines found within the application, to handle its most critical functions:

- appinit (App is starting up)

- appquit (App is shutting down)

- appfrz (App is about to be frozen to REU)

- appthw (App has just been retrieved from REU)

- mnucmd (App is receiving an external command)

As soon as an application is loaded, the loader calls the appinit routine. This allows the app to do any start up stuff, such as load in configuration data, load in custom resources or setup a timer. It is the appinit routine that is responsible for pushing the application's primary layer onto the layer stack. If it doesn't do this, then it will never receive event calls and will have no opportunity to draw itself.

The appquit routine is called automatically when the user attempts to load a different app. This gives the current application an opportunity to write its current configuration to disk, to save and close any currently open files, to free any allocated memory, to close network connections, to stop playing music, whatever it needs to do to clean itself up.

At the time of this writing, the app freeze and app thaw routines are reserved for future use. They're there to start laying the groundwork for apps to support being frozen and copied into an REU, for fast app switching. And the reverse, copying them out of an REU and back into main ram and then thawed back into life. As I develop this further, I'm sure I'll write a post about it.

Lastly, the mnucmd vector is called by the menu system when a menu item is triggered. Mnucmd handles the action codes that come from the menu system. Originally, receiving commands from the menu system was the only reason for this, which is why it's called mnucmd. However, I'm extending this to be a more generic entry point for external messaging. For example, the Color Picker Utility sends a message to the app via this vector about the picked color.
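The dispatch table can be pictured as a fixed-order list of entry points. The Python sketch below uses the five routine names from the list above, in the order given there; the SampleApp class, the index constants and the logging are hypothetical and only illustrate how the loader and the menu system would call through the table.

```python
APPINIT, APPQUIT, APPFRZ, APPTHW, MNUCMD = range(5)


class SampleApp:
    def __init__(self):
        self.log = []

    def appinit(self):
        # A real app would load its configuration and push its layer here.
        self.log.append("init")

    def appquit(self):
        # A real app would save state and free its resources here.
        self.log.append("quit")

    def appfrz(self):
        pass  # reserved for future use

    def appthw(self):
        pass  # reserved for future use

    def mnucmd(self, action_code):
        # Entry point for menu action codes and other external messages.
        self.log.append(("cmd", action_code))


def dispatch_table(app):
    """Build the table in the order the routines are listed in the text."""
    return [app.appinit, app.appquit, app.appfrz, app.appthw, app.mnucmd]


app = SampleApp()
table = dispatch_table(app)
table[APPINIT]()   # called by the loader
table[MNUCMD](7)   # called by the menu system with an action code
table[APPQUIT]()   # called when another app is about to load
```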

This can be a bit tricky to see at first, but the menu command handler is not part of the event distribution system by the main event loop. The mouse and key command events that are distributed by the main event loop are sent to the topmost layer first, the menu layer. If they are used to trigger a menu item, then the menu system sends the action code directly to the application's mnucmd dispatch routine. And the menu layer prevents the events themselves from propagating to lower layers by returning with the Carry set.

If, however, the menu layer does not handle an event, the event itself propagates to a lower layer. The next layer could be owned by a Utility. If something in the Utility is triggered by the event, it could send a command directly to the application. Depending on the situation it can stop propagation of the event or allow the event to keep going.

The next layer down may well be the layer that was pushed on by the application. Then the application's own code has the ability to handle a mouse event or a key command event directly. So, keyboard command handling can be done by the application itself, via the key command event handler of one of its pushed layers. Or the application can handle the abstracted action code via its main dispatch table; the menu system sends that code when its layer handles a key command event and finds a match in the configured menus.

Before I get into the details of Utilities, in a post soon to come, let me just say briefly that a Utility is effectively a separate program, assembled to and loaded in behind the KERNAL. The utility has its own dispatch table, with a different set of routines than applications. Utilities don't ever receive action codes directly from the menu system, for example. That wouldn't make sense, because the menu is configured by the application, with commands for the application, and the app has no idea if the user will load in a Utility or not, or what Utility it will be.

But, and here's the rub, a Utility that is loaded in concurrently with an app pushes its own layer onto the stack. And its layer is pushed above that of the main application. This gives the Utility event-priority over the application. So, while a Utility is open, a key command event that is not handled by the menu system, will be given to the Utility to handle, before being given to the app. You can see that in the diagram above.

Redrawing

The order of event handling makes even more sense for mouse events. Because, a layer is not just an abstractly prioritized event handling layer, it is also a compositing, drawing, layer. A Utility, for example, draws itself above the application. And the menu system draws itself above a Utility.

Unlike a simple singular program, such as the aforementioned FCOPY, these systems all share the screen. The application cannot simply draw whenever it pleases, because it could draw over top of something that is meant to be layered above it, causing strange artifacts. It is also not efficient to draw immediately upon handling an event. The reason is that more than one event or event type may be handled within a single iteration of the event loop.

For example, a mouse event, and a printable key event, and a scheduled timer (more on these in a moment) could all be handled in a single iteration of the event loop. After each one, rather than beginning the process of drawing, the layer calls markredraw, to signal the system that its visual appearance is out of sync with its data models. Additionally, more than one layer could be affected by the same event. For instance, a Utility could handle a keyboard command, which could cause a visual change in itself requiring a redraw, but it could also allow the event to propagate down to the app's layer, which could handle it, and then also require a redraw. If the underlying app redraws, then the Utility has to redraw above that. So it makes no sense for the Utility to draw itself immediately upon handling the event, since it doesn't know that the app below it may force it to redraw again.

Instead, each layer's handler can call markredraw if it needs to redraw. Markredraw knows by context which layer is currently being processed, and sets flags for that layer. Additionally, a layer may specify if its redraw is dirty or clean. Imagine the case of a Utility layer that is drawn above an application. The user clicks on the Utility and selects something, causing it to change color. The change is entirely inside the visual bounds of the utility. The underlying application layer does not need to reconstruct any part of the screen. In this case, the utility would declare that it needs a clean redraw.

At the end of every main event loop, the redraw flags are analyzed, and the redraw routines are called on the minimum number of affected layers, starting at the bottom going up. In the above case, first the Utility would be asked to redraw. It would redraw itself inside its bounds. Next, because the utility could be partially obscured by the menu layer, the menu layer has its redraw routine called. Meanwhile, the application, with a large complex UI, does not have its layer's redraw routine called at all.

Now imagine a different scenario. The user clicks the top bar of a Utility panel and drags it across the screen. Not only does the Utility need to be drawn in a new place, but where it used to be is now open, and exposes a new part of the Application below. The Utility declares in this case that it needs a dirty redraw, and the application layer is required to redraw itself first.

In the least expensive situation, the topmost layer is calling for a clean redraw. This happens, for example, when a menu that is already open is tracking the mouse and changing which menu item is highlighted. Only the menu system needs to redraw itself. Additionally, a clean redraw can occur if a layer is enlarging the area that it covers. For example, when no old menu is closed, but a new menu is opened, that causes the menu system to cover more of the screen, but to uncover nothing that it was previously covering.
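The choice of which layers to redraw can be sketched as a small planning function. This Python model rests on two assumptions drawn from the scenarios above: a clean redraw affects the marked layer and every layer above it, and a dirty redraw restarts from the bottommost layer. The flag values and function name are illustrative.

```python
NONE, CLEAN, DIRTY = 0, 1, 2


def layers_to_redraw(flags, present):
    """Return the layer indexes to redraw, bottom to top.

    flags[i]:   NONE, CLEAN or DIRTY for layer i
    present[i]: True if a layer occupies slot i
    """
    start = None
    for index, flag in enumerate(flags):
        if flag == NONE or not present[index]:
            continue
        # Assumed: a dirty redraw exposes what is underneath, so start at 0.
        lowest = 0 if flag == DIRTY else index
        if start is None or lowest < start:
            start = lowest
    if start is None:
        return []
    return [i for i in range(start, len(flags)) if present[i]]


# Application at 0, Utility at 1, nothing at 2, menu layer at 3.
present = [True, True, False, True]
print(layers_to_redraw([NONE, CLEAN, NONE, NONE], present))  # Utility, then menu
print(layers_to_redraw([NONE, DIRTY, NONE, NONE], present))  # app, Utility, menu
print(layers_to_redraw([NONE, NONE, NONE, CLEAN], present))  # menu only
```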

Timers

The above description covers the flow of how events are created, how layers are stacked, and how events are distributed to those layers. Including intermediate interaction between the menu system and the application's menu command handler. Then drawing is described such that it is not done unnecessarily, and not done more often than required.

However, there is one other way, besides user-generated events, that can cause an app's data models to change, requiring a redraw. Timers.

Just as the system knows what the current layer is when markredraw gets called, the current layer is also known whenever a timer is enqueued. The current layer index is written into the timer structure at the moment it is enqueued. It is the job of the interrupt service routine to call into the timer module to count down all queued timers. And it is the job of the main event loop to call into the timer module to check for any expired timers. This happens after the user-generated events are processed, but before any redraw flags are checked.

Any code called by the timer can also call markredraw. Prior to triggering an expired timer, the associated layer of the timer is set as the current layer. So the trigger code doesn't have to manually concern itself with which layer it is affecting. Subsequent drawing happens just as described above, except it has the added twist that layers may become marked for redraw even while the user is not directly interacting with them.
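The split between counting down in the interrupt and firing from the event loop can be modelled as below. This is a Python sketch, not the timer module itself: the class and method names, the repeat option, and the representation of the current layer as a plain attribute are assumptions.

```python
class Timer:
    def __init__(self, period, routine, layer, repeat):
        self.period = period        # length in jiffies (1/60 s)
        self.remaining = period
        self.routine = routine
        self.layer = layer          # layer that was current when enqueued
        self.repeat = repeat


class TimerModule:
    def __init__(self):
        self.timers = []
        self.current_layer = 0

    def enqueue(self, period, routine, repeat=False):
        timer = Timer(period, routine, self.current_layer, repeat)
        self.timers.append(timer)
        return timer

    def tick(self):
        """Called from the interrupt service routine, 60 times a second."""
        for timer in self.timers:
            if timer.remaining > 0:
                timer.remaining -= 1

    def fire_expired(self):
        """Called from the main event loop, before redraw flags are checked."""
        for timer in list(self.timers):
            if timer.remaining == 0:
                self.current_layer = timer.layer  # restore the timer's context
                timer.routine()
                if timer.repeat:
                    timer.remaining = timer.period
                else:
                    self.timers.remove(timer)
```

Because the layer index is captured at enqueue time, a routine fired later can mark "its" layer for redraw even if a different layer was being processed just before.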

This is what allows for something I showed in a video of my comparison between GEOS and C64 OS menus. A menu command is used to tell the app to set up an animation timer on the application's underlying layer. Every 5th of a second the row offset of the background is shifted up and down, and then the timer calls markredraw.

Then the user opens the menu and starts zooming around on it, opening and closing menus. While the menu layer is being interacted with via mouse events, no events are being permitted to propagate down to the application. However, the timer is still setting the redraw flags for the bottommost layer five times a second. And the result of the layered compositing is the rather excellent effect that an animation is able to tick along in the background. Even when it is partially obscured by overlaying UI, like the menu or a Utility. Very cool.

Cooperation

Lastly, let's say a few more words about multitasking.

A real operating system has four major parts that handle the input/output, filesystems, memory management and process handling. At the very least, a "real" OS includes some form of multitasking :-) André Fachat, 1996, OS/A65

I actually disagree with that last statement. But, then, it is followed with a smiley, so maybe I should take it with a grain of salt. In my books, an operating system is a piece of code that is designed to stay resident, that is designed to provide services to loadable programs, to help them to use the hardware. Without an operating system, a computer is just a bunch of chips, wired together on a bus. In a C64, the combination of KERNAL and BASIC roms is the OS. They implement a lot, including a text-based user interface. But there is definitely no multitasking.

Mind you, with many games, the built-in operating system is only used to bootstrap part of the game into memory. After that, the game may entirely dispense with the OS, and implement absolutely everything on top of the bare metal. In such a case, once the game is running, there truly is no operating system.

An operating system (OS) is system software that manages computer hardware and software resources and provides common services for computer programs. Wikipedia, Operating System

I started this post by saying that C64 OS is not multitasking. And for the most part it isn't. But there are some provisions for doing a combination of simple cooperative multitasking and fast app switching.

In preemptive multitasking, the kernel, which controls the interrupt service routine, does not merely use the ISR to service the input devices and blink the cursor. It keeps tables of multiple programs that are currently loaded into different places in memory. After the interrupt, it doesn't merely return whence it came. It does some prioritization calculations and then does some stack pointer manipulation to change the execution return point. In effect, it returns to a different program to allow that program to resume executing. Each interrupt allows the kernel to forcibly take back control from one program and give control to another.

This is of course what modern Operating Systems do, and it's totally great. It unleashes all the awesome power of modern CPUs. Preemptive multitasking is definitely possible on a C64, because it has been done by André Fachat, with OS/A65 and GeckOS, which you can read all about here. But I'm skeptical of how useful it really is, given the hardware constraints of the C64. And so I made a design decision right at the beginning that C64 OS would not be preemptively multitasking.

C64 OS has a layer system, and is event driven. It allows one main application to be loaded into the main memory area, from which memory allocations are also drawn. Plus it allows one Utility to load in behind the KERNAL, which may also make memory allocations in main memory. This amounts to one "big" process, and one "small" process.

Both processes share a single Event Loop. If an event affects the utility, the utility may respond by executing some of its code. And same with the application. This simple "multitasking" is in a sense cooperative, because if either process goes into a long loop, the other will be deprived of execution time. Hence, they must be written to cooperate, to share the CPU, the disks, the screen, and even the mouse and keyboard events. An uncooperative Utility, for example, since it gets event priority over the application, can capture all events and refuse to ever propagate any to the application. It can also refuse to quit, and effectively take the system hostage.

But before you think that sounds bad, there is a positive side. The overhead in managing these processes is paper thin. And so the maximum amount of memory and resources are available to actually making the apps and utilities do useful things.

In addition to being event driven, where a user-generated event can be handled to spin off a short behaviour in either of the two processes, there are also timers. Timers allow either the system code, or a Utility, or the Application, to schedule the recurrence of some routine. With a bit of extra effort, a large or long running task can be divided into a number of small steps. Then a timer can be used to allow those steps to run, with small breaks in between to allow the rest of the system to process events.
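Dividing a long task into timer-driven steps can be sketched with a generator. This Python example is only an illustration of the idea: the checksum task, the chunk size and the SteppedTask class are invented, and the return value of on_timer stands in for whatever mechanism reschedules or cancels the real timer.

```python
def checksum_in_steps(data, chunk_size):
    """Process data a chunk at a time, yielding after each chunk."""
    total = 0
    for start in range(0, len(data), chunk_size):
        total = (total + sum(data[start:start + chunk_size])) & 0xFFFF
        yield total


class SteppedTask:
    def __init__(self, steps):
        self.steps = steps
        self.result = None
        self.done = False

    def on_timer(self):
        """Run one step; return True if the timer should fire again."""
        try:
            self.result = next(self.steps)
            return True
        except StopIteration:
            self.done = True
            return False


task = SteppedTask(checksum_in_steps(bytes(range(10)), 4))
steps_run = 0
while task.on_timer():
    # Between steps the event loop would process events and redraw.
    steps_run += 1
```

Each call does a bounded amount of work and returns, which is what keeps the other process and the user interface responsive.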

Let me give you a simple example.

I recently wrote the Memory Info Utility, which I've been tweeting about:

Added radio buttons for varying the rate of updating. Fast is set to 2secs, slow is 8secs. Summary components can be clicked to show that value with full label. #c64 pic.twitter.com/msWljTZA2b

— Gregorio Naçu (@gregnacu) July 19, 2018

The Memory Info Utility displays a live visual map of how memory is allocated. But, as the system and the Application allocate new memory and free old memory, the Memory Utility needs to update its display. It starts a timer when it first launches (and cancels that timer when it quits) to call its memupdate routine. The timer can be configured, during runtime, to run either every 2 seconds (fast), or every 8 seconds (slow).

As long as the Application is cooperating, and not itself stealing all the CPU time, then every 2 (or 8) seconds, the main event loop will allow that routine of the Utility to run. From the user's perspective, he or she simply uses the main application to do whatever it does, and meanwhile the Utility (which is wholly separate, not written as part of the Application) is also running, is sharing the screen with the Application, and is visually updating itself more or less in the background.

So, that's cooperation. And I include it in this post, because it is relevant to the idea of command and control.

Happy weekend, and enjoy your C64!

1. Read more about this in this article at pagetable.com.

2. If you're interested in how this works, exactly, you can read my detailed post: How the C64 Keyboard Works.


Greg Naçu — C64OS.com