
Floating Point Math from BASIC (2/2)

This is Part 2 of a two part post on BASIC and floating point math. Originally I wanted to talk only about math and floating point numbers. However, because of the integration of floats into BASIC, I wanted to give a quick overview of how BASIC stores variables and manages memory. This prelude ended up being kind of long, so I split the post into two parts. If you haven't read part one yet, I recommend you read that first.

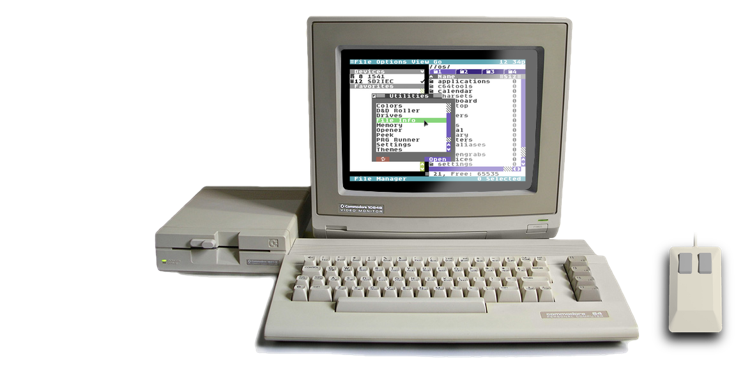

My interest in floating point math is because I want to use it in C64 OS. But it could just as easily be applied to any machine language program on the C64 that doesn't use BASIC but also doesn't want to reimplement the concepts and the routines of floating point math. The first use case I have in mind is for a Scientific Calculator Utility.

Here's a zany mock-up of how that could look.

C64 OS extends the KERNAL, it doesn't completely replace it. While some routines of the KERNAL are never called, others are absolutely depended upon. Despite this dependence, the KERNAL ROM can at times be mapped out, so C64 OS can make use of the RAM underneath. It uses this RAM either for Utilities or alternatively for bitmap data. There are rules to follow when mapping out the KERNAL so things continue to run smoothly. The same is true for use of the BASIC ROM in C64 OS, but with a few important differences.

Any operating system, or really any program, needs to decide how it will deal with the overlapping of ROMs and I/O with potentially usable RAM. As discussed in part one of this post, the standard C64 OS (KERNAL ROM and BASIC combined) "deals" with this overlap by ignoring a huge portion of RAM. 24K of 64K is essentially just never used. The system modules of C64 OS itself take up precious RAM, therefore we want as much RAM as possible to remain available for use.

The PLA, controlled by the 6510's processor port, handles mapping the ROMs and I/O overtop of the underlying RAM's addressing space. Only certain mapping combinations are permitted. I've frankly always found this confusing, but find it much clearer when all the possible combinations are laid out in a table, such as this one:

From Advanced Machine Language Book for the Commodore 64 by Abacus, Pg.133.

As you can see, I/O can be mapped in without the KERNAL or BASIC ROMs, with bit pattern 101. I/O and the KERNAL together can be mapped in without the BASIC ROM, with bit pattern 110. But the BASIC ROM can never be mapped in without the KERNAL because, as was recently pointed out to me on Twitter, the BASIC codebase is bigger than the 8K BASIC ROM. So BASIC actually spans across both chips. It occupies the 8K from $A000 to $BFFF and then continues for another 1.25K at the bottom of the KERNAL ROM from $E000 to $E500. Therefore, it would make no sense to ever have the BASIC ROM mapped in but the KERNAL ROM mapped out. Not only would it lop off the end of the BASIC codebase, but BASIC also makes frequent and unchecked use of KERNAL calls.

BASIC is $A000-$BFFF and $E000-$E500. BASIC only calls KERNAL jump table calls, what you are seeing must be calls into BASIC’s $E000-$E500 area.

— @pagetable@mastodon.social (@pagetable) October 23, 2018

As I mentioned in part one, although the Character ROM can be mapped in with the KERNAL and BASIC, you can only do this very carefully. For example, if you don't mask interrupts first, the moment an IRQ occurs while the Character ROM is mapped in, you can pretty much expect a crash. "Careful" is the most important word when it comes to using RAM that is under BASIC, I/O or the KERNAL.

Here are the essential rules about how this works in C64 OS:

- The KERNAL ROM and I/O are mapped in by default.

- The BASIC ROM is mapped out by default.

- The KERNAL and I/O may be mapped out, within a routine, but must be mapped in before returning.

- The BASIC ROM may be mapped in, within a routine, but must be mapped out before returning.

- IRQs do not need to be masked.

- The IRQ handler maps the KERNAL and I/O in and BASIC out automatically, and restores the interrupted mapping before returning.

- The KERNAL and I/O must be mapped in before calling a C64 OS KERNAL routine that depends on the KERNAL ROM.

- BASIC must be mapped out before calling a C64 OS KERNAL routine that depends on any code that resides beneath the BASIC ROM.

- All memory under I/O is utilized and managed by C64 OS automatically.

- You should have no reason to ever map in the Character ROM.

That may look a bit complicated at first. I've never actually formulated it into a set of rules before. There are essentially three contentious regions: BASIC ROM, I/O and KERNAL ROM. The memory under I/O is fully utilized by C64 OS, so you cannot make practical use of it, therefore you don't need to worry about how/when to access it. And C64 OS uses a custom character set already, so you shouldn't ever need to map in the Character ROM.

Primarily, the RAM under the BASIC ROM is used by the C64 OS KERNAL, and none of those KERNAL routines make use of the BASIC ROM itself. Therefore, during your application's runtime any routine that needs the BASIC ROM may patch it in, make the call, and then patch it out again before returning. You never need to worry about masking IRQs. They can fire while in any memory configuration and nothing bad will happen.

Similarly, you can always assume at the start of your code that BASIC is mapped out, and the KERNAL and I/O are mapped in. Why? Because that's the state to which everything in the system must reliably default. If some routine maps out the KERNAL and then returns, the system is likely to crash, and this should be considered a bug in that application.

There are, generally, two modes that C64 OS will be in: KERNAL and I/O, or all RAM. These correspond to the bit patterns (see the table above) 110 and 100. The system provides two routines that are stored in workspace memory, so they're always available.

- SEEKERIO

- SEERAM

Remember that the systemwide default is to see the KERNAL and I/O. Because this bit pattern is 110, and the pattern for including BASIC is 111, from the default state you can very easily patch BASIC in simply by incrementing the processor port (INC $01). At the end of your routine, before returning, make sure to patch BASIC back out by decrementing the processor port (DEC $01). There are macros for this, to improve clarity of intention.
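To make the bit patterns concrete, here is a small Python sketch (not C64 OS code) that models the low three bits of the processor port at $01. The names `VISIBLE`, `mapping`, `inc_port` and `dec_port` are made up for illustration, only the four patterns named in this post are listed, and the starting value $36 is just an assumed port value whose low bits are 110.

```python
# Toy model of the low three bits of the 6510 processor port ($01).
# Only the four bit patterns mentioned in the text are listed here.
VISIBLE = {
    0b111: {"BASIC", "KERNAL", "I/O"},
    0b110: {"KERNAL", "I/O"},
    0b101: {"I/O"},
    0b100: set(),  # all RAM
}


def mapping(port):
    """Return what is mapped in for a given processor port value."""
    return VISIBLE[port & 0b111]


def inc_port(port):
    """Model INC $01: add one, wrapping at 8 bits."""
    return (port + 1) & 0xFF


def dec_port(port):
    """Model DEC $01: subtract one, wrapping at 8 bits."""
    return (port - 1) & 0xFF


default = 0x36                    # assumed value; low bits are 110
with_basic = inc_port(default)    # low bits become 111, BASIC is patched in
restored = dec_port(with_basic)   # back to 110, BASIC is patched out again
```

The trick only works because the two patterns differ by exactly one in the lowest bit, which is why the INC must always be paired with a DEC before returning.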

Scientific Notation

The general idea of a floating point number is to trade in precision for range. We naturally do something just like this, all the time. When we refer to events from the distant past, for example, we say things like, the dinosaurs went extinct 65 million years ago. Or the Big Bang occurred 13.8 billion years ago. Those are very big numbers, yet we can say them in a breath. The reason is because we leave the least significant digits unspoken, and we use a keyword like thousand, million, billion, trillion, etc. to indicate where the decimal should be. No one actually cares (or knows) if dinosaurs went extinct 65,104,893 years ago, or if it was 65,104,882 years ago. When you're in the ball park of a number so big, the least significant numbers become almost entirely irrelevant. Plus, it's hard to remember and a mouthful to say: sixty five million, one hundred and four thousand, eight hundred and eighty two.

Note also the size difference between saying, Jesus lived "2 thousand" years ago, the dinosaurs died "65 million" years ago, and the universe exploded "13.8 billion" years ago. The difference in years between these epochs is many orders of magnitude, and yet they are written with a similar number of letters, and spoken with a similar number of syllables. This is the essence of scientific notation, which works pretty much the same way, but with a more formal structure.

Rather than arbitrary English words, like million and billion, which mean 6 decimal places and 9 decimal places to the right, respectively, scientific notation replaces these words with * 10ˣ, where 10 is the base and the exponent x is the number of decimal places. The exponent can be negative or positive depending on whether the decimal should be slid left or right.

The number which precedes the base is called the mantissa¹, and is the number which holds the precision. Take the dinosaurs, for example. They died 65 million, or 65 * 10⁶ years ago. But if we want to make the number more precise, we do that by making the mantissa more precise using a decimal component. We could just as easily say 65.104 * 10⁶.

To make arithmetic feasible between two numbers in this format, however, they need to be normalized. Normalized means that the mantissa falls within the range of at least 1 to less than 10. It's super easy to do this, you just slide the decimal left or right and account for that by incrementing or decrementing the exponent.

65 million becomes 6.5 * 10⁷ years ago.

And the universe is 1.38 * 10¹⁰ years old.
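Here's a minimal sketch of that normalization step in Python. The function name `normalize` is my own, and I'm using the standard `decimal` module (with string inputs) so the decimal digits stay exact. It's an illustration of the idea, nothing more.

```python
from decimal import Decimal


def normalize(value):
    """Return (mantissa, exponent) with 1 <= |mantissa| < 10.

    value should be a string or int so no binary rounding sneaks in.
    """
    number = Decimal(value)
    if number == 0:
        raise ValueError("zero has no normalized form")
    # adjusted() is the power of ten of the most significant digit.
    exponent = number.adjusted()
    # scaleb() slides the decimal point without touching the digits.
    mantissa = number.scaleb(-exponent)
    return mantissa, exponent


dinosaurs = normalize("65e6")     # 65 million years
universe = normalize("13.8e9")    # 13.8 billion years
```

Sliding the point and adjusting the exponent never changes the value, only how it is written.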

Floating Point Numbers

A floating point number on a computer is, more or less, scientific notation in binary.

Scientific notation is expressed in base-10. Setting the base, in the expression, to 10 has the effect that changing the exponent slides the decimal point one neat column at a time. To represent this in binary, though, can be a bit of a head scratcher.

First of all, we can't refer to the point as the "decimal point", it is referred to instead as the "binary point." And the values in each column can only be 1 or 0. Here's how the place values before and after the binary point work, compared to how they work in decimal.

In decimal, each successive place to the right of the decimal point is one over the mirrored column value to the left of the one's column. 1 over 10, 1 over 100, 1 over 1000 etc. The same is true in binary. Each place to the right of the binary point is just one over the mirrored column value to the left of the one's column. In this case, 1 over 2, 1 over 4, 1 over 8, etc.

Let's say we want to represent the number 2.5₁₀ (the subscript indicates the base) in binary. That would be 10.1₂. Makes sense. Everything to the left of the binary point is just like regular binary. A one in the two's column and a zero in the one's column. But to get 0.5, we need a one in the one over two's column. Because, that's a half.

But, now we need to normalize. Remember, in normalized scientific notation, there can be only one "column" to the left of the decimal point. In decimal, a single column, a single digit, can be from 0 to 9. But it can't be zero, because if it were zero, you could continue to normalize by sliding the decimal another place until the single left column is not zero, and adjusting the exponent accordingly. For example:

0.123 * 10² can just be normalized to 1.23 * 10¹

In binary then, we need to slide the binary point until there is only one column to the left of the point. There is an oddity here though in binary. The value to the left of the point has to fall between 1 and the largest digit in the base. But, in binary, 1 is the largest digit in the base. So, the digit to the left of the binary point is always 1. Here's what we get:

10.1 * 10⁰ gets normalized to 1.01 * 10¹ (Note: that * 10 is a binary 10).

In whatever system you're using, the base is always the same. In scientific notation the base is always 10. In floating point numbers represented in binary on a computer, the base is always 2. Because this number never changes, it is not necessary to store it along with every stored floating point number. We only need to store the parts that vary: the mantissa and the exponent. It is also necessary to store the sign of the mantissa, which is needed to support negative numbers. But we'll return to that later.

On a C64, the BASIC ROM's implementation of floating point representation is not exactly the same as the standard IEEE 754 specification. But, who really cares about the standard? We're on our C64s, our code doesn't have to exchange floats with PC software, we can just happily live on in our little bubble. If you really want to know how floats are structured on PCs or Macs, you could look up IEEE 754 and its later revisions.

The C64 uses 5 bytes to store a floating point number. Remember that from part one? Regular BASIC variables are 7 bytes. 2 bytes for the variable name/type, and 5 bytes left over to store the float value. So, how are these 5 bytes structured? Like this:

By convention, the first byte is the exponent. The exponent is a signed 8-bit value, however it is not signed using 2's Complement. For some technical reason that I don't understand, it makes the math easier to not use 2's Complement. To compute the signed value of the exponent, you subtract 129 from its unsigned value.

Here's how that works:

| Exponent byte | Calculation | Signed exponent |
|---|---|---|
| $FF | 255 - 129 | 126 |
| $81 | 129 - 129 | 0 |
| $80 | 128 - 129 | -1 |
| $01 | 1 - 129 | -128 |
| $00 | Special | The whole float value = 0 |

There is just one exception. If the exponent starts as $00, then, rather than subtracting 129 to arrive at an exponent of -129, instead, the final value of the whole float is zero, regardless of what is in the mantissa. For a computed exponent of 0, that's $81, or 129, which when you subtract 129 is zero. So the overall range of the exponent is from 126 to -128.
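The offset rule is simple enough to sketch in a few lines of Python. The function names `decode_exponent` and `encode_exponent` are hypothetical, and returning `None` for $00 is just my way of flagging the special "whole float is zero" case.

```python
def decode_exponent(byte):
    """Convert the stored exponent byte to its signed value.

    Returns None for $00, which means the whole float is zero.
    """
    if not 0 <= byte <= 0xFF:
        raise ValueError("exponent must fit in one byte")
    if byte == 0:
        return None
    return byte - 129


def encode_exponent(exponent):
    """Convert a signed exponent (-128..126) to the stored byte."""
    if not -128 <= exponent <= 126:
        raise ValueError("exponent out of range")
    return exponent + 129
```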

The following 4 bytes, or 32 bits, is the mantissa. But it's structured in fractional binary, with the point normalized to be just following the leftmost bit. Note also, that the 6502 is a little endian processor. This means that pointers are structured with the low or least significant byte first. Not so with floats. On the C64 the mantissa of a float is big endian. The most significant of the 4 bytes is furthest left, or lowest in memory. A diagram for comparison is necessary.

I must be a visual thinker, because I really like seeing tables and diagrams that lay everything out bare. You can see in the image above that not only are the values of the bits different, but the order of the bytes is reversed.

How to structure a float then?

Manually converting a regular decimal number into the binary floating point format is actually a remarkably laborious process.

Let's pick a number, like: 138.375

We can convert the whole number part first, just like normal. It's 138 = 128 + 8 + 2, or 10001010. But the fractional part is more complicated. First, we take the bit for 1/2, that's 0.5. Unfortunately 0.5 is bigger than 0.375, so this bit must be 0. Then we take the next bit for 1/4, that's 0.25. That fits in 0.375, so this bit must be set, and the remainder calculated. So, 0.375 - 0.25 is 0.125. The remainder is greater than zero, so we move to the next bit for 1/8 which is 0.125. This fits, so this bit must be set. Calculate the remainder again, it's zero, so we can stop.

The final result then is that 138.375₁₀ = 10001010.011₂
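The manual process above (try 1/2, then 1/4, then 1/8, keeping the remainder each time) can be sketched in Python like this. The function name `to_binary` and the `max_fraction_bits` cut-off are my own additions. I use `fractions.Fraction` so the remainders are exact.

```python
from fractions import Fraction


def to_binary(value, max_fraction_bits=32):
    """Convert a non-negative decimal string to a binary string."""
    number = Fraction(value)
    if number < 0:
        raise ValueError("only non-negative values are handled here")
    whole = number.numerator // number.denominator
    remainder = number - whole
    bits = []
    place = Fraction(1, 2)          # start with the 1/2 column
    while remainder > 0 and len(bits) < max_fraction_bits:
        if place <= remainder:      # the column fits, so set the bit
            bits.append("1")
            remainder -= place
        else:                       # too big, so the bit stays clear
            bits.append("0")
        place /= 2                  # move on to the next column
    return format(whole, "b") + "." + ("".join(bits) or "0")


example = to_binary("138.375")
```

Not every decimal fraction terminates in binary. For instance 0.1 never reaches a remainder of zero, which is why the sketch stops after a fixed number of bits.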

That binary number isn't normalized though, because the binary point is somewhere in the middle. The exponent begins at 0, and increments once for each place we slide the point left.

| Places slid | Value |
|---|---|
| 0 | 10001010.011 * 10⁰ |
| 1 | 1000101.0011 * 10¹ |
| 2 | 100010.10011 * 10² |
| 3 | 10001.010011 * 10³ |
| 4 | 1000.1010011 * 10⁴ |
| 5 | 100.01010011 * 10⁵ |
| 6 | 10.001010011 * 10⁶ |
| 7 | 1.0001010011 * 10⁷ |

UPDATE: November 30, 2022

Thank you to Brian Bray for pointing out a mistake in the above table and subsequent result. It's been corrected.
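Sliding the binary point can also be done mechanically. Here's a rough Python sketch, working directly on a string of binary digits. The function name `normalize_binary` is hypothetical, and the exponent it returns is the plain signed count of places slid, before any offset is applied.

```python
def normalize_binary(text):
    """Slide the binary point so exactly one 1 sits to its left.

    Returns (mantissa_string, exponent).
    """
    whole, _, fraction = text.partition(".")
    digits = whole + fraction
    first_one = digits.find("1")
    if first_one < 0:
        raise ValueError("zero cannot be normalized")
    # Positive when the point moves left, negative when it moves right.
    exponent = len(whole) - 1 - first_one
    significant = digits[first_one:].rstrip("0")
    mantissa = significant[0] + "." + (significant[1:] or "0")
    return mantissa, exponent


mantissa, exponent = normalize_binary("10001010.011")
```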

Now, you'll recall that the base doesn't need to be stored, because it never changes. And we need to convert the exponent 7 into that special offset-signed exponent format.

7 + 129 = 136 → $88 → 10001000

Now we put the exponent as the first byte, followed by the normalized mantissa, to get this:

| Exponent | Mantissa (padded out to the end) |
|---|---|
| 10001000 | 10001010 01100000 00000000 00000000 |

So there you have it. But wait, something's missing. How is the sign of this number stored? The sign of the exponent is built into the structure of the 8-bit exponent, but that only determines whether the decimal should be shifted left or right. If it shifts right the number gets bigger than 1. If it shifts left, the number gets smaller than one, but it'll still be bigger than zero. We need an additional bit to indicate whether this entire number should be negative or positive.

Recall that in scientific notation, when normalized, the single digit to the left of the decimal point must fall within the range of 1 to 9, or 1 to the base-1. But the strange effect of binary only having two possible digits, 0 or 1, means that the single digit to the left of the binary point, when normalized, must fall within 1 to base-1, or 1 to 2-1, or... 1 to 1! The leftmost bit is ALWAYS 1. But remember, there is no use storing anything that doesn't vary, that fixed leftmost bit can just be reconstructed in the math routines. This leaves that bit unused.

C64's BASIC, therefore, uses the leftmost bit of the mantissa as a negative sign. But since our number, 138.375 is positive, then the real final representation is like this:

| Exponent | +Mantissa (padded out to the end) |
|---|---|
| 10001000 | 00001010 01100000 00000000 00000000 |
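Putting the whole thing together, here is a hedged Python sketch that packs a number into the 5-byte layout described above and unpacks it again. The function names are my own, and it is not a port of the BASIC ROM's conversion code. In particular it simply truncates any bits beyond the 32-bit mantissa, which is an assumption made for simplicity.

```python
import math


def pack_c64_float(value):
    """Pack a Python float into 5 bytes: exponent, then 4 mantissa bytes."""
    if value == 0:
        return bytes(5)                       # exponent $00 means zero
    # frexp gives abs(value) == fraction * 2**exponent, 0.5 <= fraction < 1
    fraction, exponent = math.frexp(abs(value))
    # Same as (exponent - 1) + 129 when the mantissa is read as 1.xxx
    exponent_byte = exponent + 128
    if not 1 <= exponent_byte <= 255:
        raise OverflowError("value does not fit the 8-bit exponent")
    mantissa = int(fraction * (1 << 32))      # truncates extra precision
    mantissa &= 0x7FFFFFFF                    # drop the always-1 top bit
    if value < 0:
        mantissa |= 0x80000000                # reuse that bit as the sign
    return bytes([exponent_byte]) + mantissa.to_bytes(4, "big")


def unpack_c64_float(data):
    """Reverse of pack_c64_float."""
    if len(data) != 5:
        raise ValueError("expected exactly 5 bytes")
    if data[0] == 0:
        return 0.0
    raw = int.from_bytes(data[1:], "big")
    sign = -1.0 if raw & 0x80000000 else 1.0
    mantissa = raw | 0x80000000               # restore the implied 1
    # mantissa holds 1.xxx scaled up by 2**31
    return sign * math.ldexp(mantissa, data[0] - 129 - 31)


packed = pack_c64_float(138.375)
```

For 138.375 this should agree with the bytes worked out by hand above, and flipping the sign only changes the top bit of the first mantissa byte.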

Where BASIC meets Machine Code

It is a bit confusing exactly where BASIC begins and where machine code starts. After all, the BASIC interpreter is written in 6502 assembly.

When you enter your BASIC program, line by line, each line is parsed and BASIC keywords converted to their tokens, and memory and the pointers between lines are moved and updated right away. When you run your program, BASIC maintains an execution pointer and steps along the line looking for one of very few possible valid options. Initially it's looking for either a token to execute, or a variable assignment. If it finds something unexpected, it's a syntax error.

If it finds something that looks like a variable name (starts with a letter) then immediately after the variable name it's expecting an equals token. If it doesn't find one, syntax error. As BASIC executes, it uses that pointer to walk through the code one byte, one character, at a time. When it finds a token, the token is used to index a table of pointers to machine language routines that implement the behavior of the command. The implementation expects a certain set of parameters to follow, which it parses out of the line following itself by advancing the execution pointer. A lot of power comes from the ability for these parameters to be nested expressions.

Often, where a number is expected, one can put an expression that will resolve to a number. Or where a string is expected, an expression that results in a string. Although, not always, it depends on the implementation of the command. The most notable, and for me perplexing, are the limitations of GOTO. GOTO can only take a whole number. And it can't take a numeric variable, nor an expression that will return a number. But, as a strange side effect, you can put anything after the number and no syntax error results, because the implementation of GOTO takes the execution away from the line before it ever has a chance to discover that there is sheer nonsense following the number. Check this out:

Arithmetic operations, like +, -, *, /, and ↑, as well as >, =, and <, all get tokenized and each has its own machine code implementation to do its thing. Parameters for an arithmetic operation can be numeric variables, or they can be inline constants. Such as these examples:

- A = B / C

- A = B / 10

- A = 5 / 10

In every case, the equals token is looked up and its machine code runs. And the division token is looked up and its machine code is run.

Now, if something like a division is operating on two variables, then BASIC needs to find the variables, so it has to go through a variable look up process. This was covered in part one of this post. It starts at the pointer to where variables start, and searches through skipping 7 bytes at a time looking for a matching variable name. When it finds it, it has found where the number is stored, as a 5 byte float. These 5 bytes then need to be copied somewhere (we'll get to that in a minute). The division operation then has to lookup its second variable. This goes through the variable search process again, and when found that 5 byte float is copied to somewhere so that the division operation can be computed upon the two floats. One wonders why BASIC is slow? Well, even though the division operation is written in machine code, searching for those variables takes time.

But, what happens when instead of two variable names, the division operation uses two numeric constants instead? As we saw, also in part one, how a BASIC line is parsed and tokenized, only the keywords (and arithmetic symbols and functions) are converted to tokens. Variable names are left as strings of PETSCII… and so are numeric constants. Remember that GOTO 100, the 100 is left as the characters "1","0","0" ($31,$30,$30). What this means is that, when the division operation (or another operation expecting a number) reads in its arguments, it has to convert a PETSCII string representation of the number into the float format (or into an INT format, depending on the operation.) Conversion from string to number is notoriously slow. So I came up with a quick example to show just how brutal it can be.

The two examples, in their limited scope, do exactly the same thing. They loop 500 times, adding the result of a division to the total on each iteration. In the first example, the division is the result of two variables, A/B. Is it slow? Yes it's quite slow. The interpreter divides A by B, 500 times over, even though the result is always the same. And on each iteration A and B have to be looked up and their values have to be copied from variable space to math space. It's damn slow. It takes almost 5 seconds.

But now look at the second example. Instead of dividing two variables, it's a division of two inlined floating point constants. The problem is that these constants are in PETSCII string format. And it takes way longer to parse them into floats than it does to look up a variable that is already in the float format. And, in this example, both strings have to be parsed into floats all over again on every iteration. The final result is exactly the same, but it takes a staggering 35.7 seconds!

Of all the code in the BASIC ROM, what exactly is BASIC is kinda fuzzy. BASIC is some hairy complex conglomerate of a line-by-line parser, a simple memory manager, a variable storage and lookup system, an interpreting execution runtime, and a library of string and math routines.

But what happens if you just call one of the math routines alone?

Once two floating point formatted numbers are loaded into the math regions of memory, performing an arithmetic routine on them is 100% machine code, with no interpretation. It is as fast as it can be. (Assuming it is. After all, everything can be optimized.)

Now, it's still slow to work on floating point numbers, because the 6502 has no native support for working with the format. But then on the other hand, the 6502 doesn't have support for multiplying or dividing INTs either. Working directly with floats, using the BASIC math routines, is slow. BUT, it is not slow in the same way or for the same reason that BASIC programs in general are slow. The floating point math routines don't care how the floats get used, or where they get stored, or how and when they are converted to and from strings. These are all things that "BASIC" manages, that we can completely ignore, or rather, reimplement.

Floating Point Operations

First a little review of how the 6502 performs regular integer arithmetic.

The 6502 has 3 registers, the accumulator, plus the X and Y index registers. It has instructions to copy a byte from memory to a register or back. It has instructions for moving a byte between the accumulator and the index registers. It also has a number of arithmetic and arithmetic-like instructions. For example, the index registers can be incremented or decremented. This is essentially adding or subtracting one. There are also instructions for shifting the accumulator left and right. These are essentially multiplying and dividing by 2. These operations can also be performed directly on a memory address, at some cost of time. They all, however, have only one operand. INC $FF, for example, adds 1 to the contents of $FF, but the "1" is implied. LSR $FF, for another example, divides the byte stored at $FF by 2, but the "2" is implied.

There are also a number of arithmetic instructions that require 2 operands. Add with Carry (ADC), Subtract with Carry (SBC), in addition to three bitwise instructions. All of these are implemented by the CPU's arithmetic logic unit, or ALU. These 2-operand instructions can only be used in intimate conjunction with the accumulator. For example, there is no instruction and no mode of any instruction that will take as arguments two arbitrary memory addresses, combine their contents together in some arithmetic way and then write the result back to one of those two locations. They must use the contents of the accumulator as one of the operands, in addition to one other arbitrary operand.

Therefore, to add the contents of $FE to the contents of $FF, you must do either of the following:

Or, the following, which is only subtly different:

In both cases, the contents of one memory address are copied into the accumulator. Then the contents of a memory address are added to the contents of the accumulator, and the result is put into the accumulator, overwriting the original operand. The accumulator can then be written back to memory. Every 2-operand instruction works the same way. One operand must already be in the accumulator and the result of the operation gets written to the accumulator. If you're smart, and you have multiple operations to perform, you can leave the result from the previous operation in the accumulator as one of the operands for the next operation. That would be much faster than writing it to memory, only to read it right back in again.
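If it helps to see the flow of data, here's a toy Python model of that accumulator pattern. The class `ToyCpu` and its methods are purely illustrative. It models only the accumulator, the carry flag and 256 bytes of memory, ignores decimal mode and every other flag, and the load, clear carry, add, store sequence is one plausible way to do the addition, not a listing from this post.

```python
class ToyCpu:
    """Just enough of a CPU to show the accumulator pattern."""

    def __init__(self):
        self.a = 0                      # the accumulator
        self.carry = 0                  # the carry flag
        self.memory = bytearray(256)    # zero page only

    def lda(self, address):
        self.a = self.memory[address]

    def clc(self):
        self.carry = 0

    def adc(self, address):
        # One operand is already in A, the other comes from memory.
        total = self.a + self.memory[address] + self.carry
        self.carry = 1 if total > 0xFF else 0
        self.a = total & 0xFF           # result overwrites A

    def sta(self, address):
        self.memory[address] = self.a


def add_fe_to_ff(cpu):
    """Add the byte at $FE to the byte at $FF, storing the sum in $FF."""
    cpu.lda(0xFE)
    cpu.clc()
    cpu.adc(0xFF)
    cpu.sta(0xFF)


cpu = ToyCpu()
cpu.memory[0xFE] = 5
cpu.memory[0xFF] = 7
add_fe_to_ff(cpu)
```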

Floating point arithmetic on the C64 works along a very similar principle. There is a floating point accumulator, and there are routines to move data into and out of it, MEMFAC and FACMEM. One copies a floating point number from memory into the floating point accumulator. The other copies the contents of the floating point accumulator to somewhere in memory. There is a set of primitive arithmetic operations: add, subtract, multiply, divide and power. In each case, you call the routine with a pointer in the A and Y registers² to the second operand. The operand pointed to is combined arithmetically with the contents of the floating point accumulator (FAC for short) and the result is written into the FAC.

In its most simple form, this is all you need to know to start using floating point math. If you wanted to write a simple calculator, for instance, you could start by putting constants to all the numbers 0 through 9 into your code. And then use these functions to move the constants and a running total in and out of the FAC as the user interacts with the calculator UI.

Here's how we can compute the constants for 0 to 9. (Some assemblers and some monitors have native support for conversion to the C64's float format, with a pseudo op-code, .flp.)

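| Digit | Binary | Places to slide |
|---|---|---|
| 0 | 0.000 0000 | 0 (special) |
| 1 | 1.000 0000 | 0 |
| 2 | 10.00 0000 | 1 |
| 3 | 11.00 0000 | 1 |
| 4 | 100.0 0000 | 2 |
| 5 | 101.0 0000 | 2 |
| 6 | 110.0 0000 | 2 |
| 7 | 111.0 0000 | 2 |
| 8 | 1000. 0000 | 3 |
| 9 | 1001. 0000 | 3 |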

The part left of the binary point is your regular binary for the values 1 through 9. The extra digit at the end is how far we need to slide the binary point. We need to add 129 to each of these to get the correct exponent offset.

| Digit | Exponent | Mantissa |
|---|---|---|
| 0 | 0 | 00000000 00000000 00000000 00000000 (special) |
| 1 | 129 | 10000000 00000000 00000000 00000000 |
| 2 | 130 | 10000000 00000000 00000000 00000000 |
| 3 | 130 | 11000000 00000000 00000000 00000000 |
| 4 | 131 | 10000000 00000000 00000000 00000000 |
| 5 | 131 | 10100000 00000000 00000000 00000000 |
| 6 | 131 | 11000000 00000000 00000000 00000000 |
| 7 | 131 | 11100000 00000000 00000000 00000000 |
| 8 | 132 | 10000000 00000000 00000000 00000000 |
| 9 | 132 | 10010000 00000000 00000000 00000000 |

Now I've stripped out the decimal marker, offset the exponent and moved it to the front, and added the extra bytes of precision, which are all zero for these nice simple round numbers. Note how the left most bit of every first precision byte is 1. These all need to be set to zero to make these numbers positive.

| Digit | Exponent | Mantissa |
|---|---|---|
| 0 | 0 | 00000000 00000000 00000000 00000000 (special) |
| 1 | 129 | 00000000 00000000 00000000 00000000 |
| 2 | 130 | 00000000 00000000 00000000 00000000 |
| 3 | 130 | 01000000 00000000 00000000 00000000 |
| 4 | 131 | 00000000 00000000 00000000 00000000 |
| 5 | 131 | 00100000 00000000 00000000 00000000 |
| 6 | 131 | 01000000 00000000 00000000 00000000 |
| 7 | 131 | 01100000 00000000 00000000 00000000 |
| 8 | 132 | 00000000 00000000 00000000 00000000 |
| 9 | 132 | 00010000 00000000 00000000 00000000 |

And lastly, let's convert all of these to a common hexadecimal format, for easy input into our source code.
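If you'd rather not do the hex conversion by hand, a few lines of Python will do it. This sketch takes the exponent and first mantissa byte for each digit straight from the table above and formats each float as five hex bytes. The `.byte` directive and the trailing comment style are assumptions about your assembler, so adjust them to taste.

```python
# (exponent byte, first mantissa byte) for the digits 0 through 9,
# copied from the positive-sign table above. The remaining three
# mantissa bytes are all zero for these values.
DIGITS = [
    (0, 0b00000000),
    (129, 0b00000000),
    (130, 0b00000000),
    (130, 0b01000000),
    (131, 0b00000000),
    (131, 0b00100000),
    (131, 0b01000000),
    (131, 0b01100000),
    (132, 0b00000000),
    (132, 0b00010000),
]


def as_hex(exponent, first_mantissa_byte):
    """Format one 5-byte float as comma separated $xx values."""
    data = bytes([exponent, first_mantissa_byte, 0, 0, 0])
    return ",".join(f"${byte:02x}" for byte in data)


def constants_table():
    """Return one source line per digit."""
    return [
        f".byte {as_hex(exponent, first)}  ;{digit}"
        for digit, (exponent, first) in enumerate(DIGITS)
    ]


for line in constants_table():
    print(line)
```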

There you have the constants for simple starting digits. You could paste the above into your source code and begin using them to perform simple arithmetic. First though, you'd need to know the addresses of the routines. Here they are:

| Routine | Address | Operation |
|---|---|---|
| MEMFAC | $BBA2 | A/Y -> FAC |
| FACMEM | $BBD4 | FAC -> X/Y |
| PLUS | $B867 | A/Y + FAC -> FAC |
| MINUS | $B850 | A/Y - FAC -> FAC |
| MULT | $BA28 | A/Y * FAC -> FAC |
| DIVID | $BB0F | A/Y ÷ FAC -> FAC |

In each of these cases, A/Y is a pointer (A = Low Byte, Y = High Byte) to a floating point number in memory. The exception is FACMEM. In that case, the FAC is written to the memory pointed to by X/Y (X = Low Byte, Y = High Byte).

UPDATE: August 30, 2021

The registers used for the pointer for FACMEM were incorrectly stated as A/Y, when they are actually X/Y. Thanks to Mark Reed for the heads up.

As long as you don't need to convert from floating point into some other format, this is actually all you need to know. Floats in their 5 byte format can be written to disk, say to an application's config file. And they can be read from disk into memory, simply by reading and writing 5 bytes. Floats can be embedded in the middle of a struct, by nothing more complicated than just allotting 5 bytes.

In the simplest use case, there is no need to know where the FAC is, or what it is composed of. These routines handle everything for you. Here's a simple example of how to divide one number by another and get back a result.

First we include a floats header file. In this file we'll put the equates to each of the floating point functions listed in the table above, for easy access.

Next, we have a macro to set an A/Y pointer to the appropriate floating point number, based on the supplied index 0 through 9. This effectively lets us "convert" a value from 0 to 9 to a floating point number. The table of floating point numbers is in the floats.s file included at the very end of this code.

Use the macro to grab a pointer to "2" then call memfac to put the float 2 into the FAC. Use the macro again to grab a pointer to "9". Then call the divide routine. The result of 9/2 ends up in the FAC. Set a pointer to our "total" label, which is just 5 reserved bytes in memory, and call facmem to copy the fac to total. And that's it! We should now have the float representation of 4.5 stored in the memory at total.
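The order of the operands is easy to get backwards, so here's a toy Python model of the calling pattern. It is emphatically not the ROM code: the class `ToyFloatUnit` is made up, plain Python floats stand in for the 5-byte format, and values are passed directly where the real routines take pointers. What it does preserve is the rule from the table above, that the memory operand goes on the left and the FAC on the right.

```python
class ToyFloatUnit:
    """Models only the data flow of the FAC-based routines."""

    def __init__(self):
        self.fac = 0.0

    def memfac(self, operand):
        """memory -> FAC"""
        self.fac = float(operand)

    def facmem(self):
        """FAC -> memory (here, just return the value)"""
        return self.fac

    def plus(self, operand):
        self.fac = operand + self.fac

    def minus(self, operand):
        self.fac = operand - self.fac   # memory minus FAC

    def mult(self, operand):
        self.fac = operand * self.fac

    def divid(self, operand):
        self.fac = operand / self.fac   # memory divided by FAC


unit = ToyFloatUnit()
unit.memfac(2.0)        # put 2 into the FAC
unit.divid(9.0)         # 9 / FAC, so 9 / 2
total = unit.facmem()   # copy the FAC out to "total"
```

Because subtraction and division are not commutative, loading the divisor into the FAC first and then pointing at the dividend is what produces 9/2 rather than 2/9.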

Inside Floating Point Operations

Presumably, though I'm sure someone will let me know if I'm wrong, there is a hidden register inside the 6502 which temporarily holds the non-accumulator operand.³ Let's say we load a value into the accumulator with LDA. Then we execute an ADC. The processor probably loads the argument of ADC into a temporary internal register, and simultaneously transfers the accumulator into another temporary internal register. Both of these registers then, probably, feed through the ALU, and the output of the ALU gets written back into the accumulator.

If this indeed is the general flow of information inside the 6502, then floating point operations work similarly to this as well. There is another set of addresses just following the FAC called ARG (for argument). When you call an arithmetic operation with a pointer to a float, the first thing it does is copy the float, pointed to in memory, to ARG. Then it assumes FAC is already prepared, and does its work arithmetically combining the values stored in those two areas.

The main difference between the two is that "the instruction" is the lowest level of access we have to the 6502's internal states. If there is no instruction that gives us access to read or write its internal registers, then they are truly private. However, with the floating point routines, the FAC and ARG are just arbitrarily chosen memory addresses somewhere in zero page. Which means we can read and write to any individual bytes of FAC or of ARG if we know where they are. In fact, there are routines specifically for working directly with ARG.

| Routine | Address | Operation |
|---|---|---|
| MEMARG | $BA8C | A/Y -> ARG |
| ARGFAC | $BBFC | ARG -> FAC |
| FACARG | $BC0C | FAC -> ARG |

These routines, as you can guess, allow you to load ARG directly with a float from memory. Additionally, you can transfer the contents of ARG into FAC, or the contents of FAC into ARG. The question is, why would you ever want to do this?

Remember, when you call any of the arithmetic operations listed above with an A/Y pointer, the first thing that happens is that the float pointed to by A/Y gets copied to ARG, which is done in the first 3 bytes, by a JSR MEMARG. Then the routine continues by operating directly on the contents of FAC and ARG. If, however, you want to call an operation multiple times with the same value for ARG, it would be much faster to leave the contents of ARG unchanged, and jump to the part of the routine just after memory gets copied into ARG. The whole set of routines, then, can be regarded as having a second mode, one that simply operates on ARG and FAC directly, rather than on memory and FAC.

| Routine | Address | Operation |
|---|---|---|
| PLUS_ARG | $B86A (PLUS + 3) | ARG + FAC -> FAC |
| MINUS_ARG | $B853 (MINUS + 3) | ARG - FAC -> FAC |
| MULT_ARG | $BA2B (MULT + 3) | ARG * FAC -> FAC |
| DIVID_ARG | $BB12 (DIVID + 3) | ARG ÷ FAC -> FAC |

There is a small gotcha when using these routines, however. First, let's look at what happens when you call MEMFAC, FACMEM or MEMARG.

A float that is stored in memory, which can be written to disk, or passed around by your program, is in the 5-byte format described earlier. This format, however, is a "packed" format. The sign bit being stored in the leftmost bit of mantissa 1 is a clever way to save memory and storage, especially when storing lots of floats. However, this packed format is not ideal for actually doing the arithmetic.

Therefore, MEMFAC and MEMARG do not merely copy 5 bytes from one place to another. They unpack the bytes into a more usable 6-byte format. The 6th byte is for the sign, and the leftmost bit of mantissa 1 gets restored to 1. FACMEM, then, does the reverse, packing the sign back into the leftmost bit of mantissa 1 before copying the bytes out to memory.
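The unpack and pack steps can be sketched in a few lines of Python. This is only a model of the byte shuffling described above, with hypothetical function names. It assumes that only the leftmost bit of the sign byte matters, and it ignores any rounding the real routines may do.

```python
def unpack(packed):
    """5 packed bytes -> 6 working bytes: exponent, mantissa 1-4, sign."""
    exponent, m1, m2, m3, m4 = packed
    sign = m1 & 0x80                      # model assumption: keep only the sign bit
    return [exponent, m1 | 0x80, m2, m3, m4, sign]

def pack(unpacked):
    """6 working bytes -> 5 packed bytes, sign folded back into mantissa 1."""
    exponent, m1, m2, m3, m4, sign = unpacked
    return [exponent, (m1 & 0x7F) | (sign & 0x80), m2, m3, m4]

plus = [0x83, 0x10, 0x00, 0x00, 0x00]     # +4.5 in the packed format
minus = [0x83, 0x90, 0x00, 0x00, 0x00]    # -4.5 differs only in the sign bit

print([hex(b) for b in unpack(plus)])     # ['0x83', '0x90', '0x0', '0x0', '0x0', '0x0']
print([hex(b) for b in unpack(minus)])    # ['0x83', '0x90', '0x0', '0x0', '0x0', '0x80']
```

Note how the two unpacked mantissas are identical. Only the separate sign byte tells them apart, which is what makes the unpacked form easier to do arithmetic on.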

Here, finally, are the zero page addresses used for the unpacked format of FAC and ARG:

| | FAC | ARG |
|---|---|---|
| Exponent | $61 | $69 |
| Mantissa 1 | $62 | $6A |
| Mantissa 2 | $63 | $6B |
| Mantissa 3 | $64 | $6C |
| Mantissa 4 | $65 | $6D |
| Sign | $66 | $6E |

Now to explain the gotcha. The end of MEMARG loads the accumulator with the exponent byte of FAC. The rest of the arithmetic routines expect the accumulator to be set like this. Therefore, before calling any of the routines that operate directly on ARG and FAC, you must load the accumulator with FAC's exponent first. (LDA $61)

Here's another little trick, for use in C64 OS. We know that these zero page addresses only ever get used by the BASIC ROM when calling one of the floating point routines. At all other times, these addresses are safe to be used for other purposes. Bear in mind that while your application is running, a Utility could be loaded in at the same time, which itself might make use of floats. Knowing this, we can make temporary use of these addresses. We can't leave data in them for the lifetime of the application, because they could easily be corrupted by code from a Utility. Therefore, both from the perspective of a Utility or an Application, the space should be considered volatile, but available, any time the routine is not explicitly working with floats.

C64 OS has a math module. This module contains routines for 16-bit integer multiplication and division. Each of these routines requires temporary zero page space: for the divisor, dividend, quotient and remainder, as well as for the multiplier, multiplicand and product. These routines use the ZP addresses of FAC for these temporary values.

Transcendental and Other Useful Functions

The BASIC ROM also provides a number of other floating point routines, such as a set of so-called transcendental functions. Each of these functions works on the contents of the FAC, and replaces the FAC with the new result.

| Routine | Address | Operation |
|---|---|---|
| ATN (arctangent) | $E30E | ATN(FAC) -> FAC |
| COS (cosine) | $E264 | COS(FAC) -> FAC |
| SIN (sine) | $E26B | SIN(FAC) -> FAC |
| TAN (tangent) | $E2B4 | TAN(FAC) -> FAC |

There are yet still other useful functions:

| Routine | Address | Operation |
|---|---|---|
| EXP (power of e) | $BFED | EXP(FAC) -> FAC |
| INT (greatest integer) | $BCCC | INT(FAC) -> FAC |
| LOG (natural logarithm) | $B9EA | LOG(FAC) -> FAC |
| RND (random number) | $E097 | RND(FAC) -> FAC |
| SQR (square root) | $BF71 | SQR(FAC) -> FAC |

Leaving the Bubble: Conversion Routines

Now you know most of what you need to know to work with floating point numbers, in the floating point number format. However, sooner or later you're going to need to convert numbers into and out of the floating point format.

There are two general categories of conversion: The first is to and from string format. Converting to string would be used for displaying floating point numbers to the user, such as on the display of the scientific calculator. Converting from a string could be used to create a floating point number from user input, either by typing on the keyboard or clicking around on a calculator's UI buttons.

The second general category of conversion is to and from integers. Floating point numbers on the C64 have a fixed size. They are always signed, and always have 32 bits of precision, with an 8-bit exponent. Ints, on the other hand, come in a variety of types: signed and unsigned, 8, 16, 24 and 32 bit. There are bigger ones, but the BASIC ROM only has routines for handling these. And even then, it only has routines for handling some of these, but the others can be obtained with a very small amount of support code.

Let's look first at string conversion.

There is a function in the BASIC ROM called STRFAC[4], which, as you can imagine, will parse a string containing a number into FAC as a floating point number. This routine depends on a routine that BASIC uses to walk through a line of BASIC code. The routine is in zero page and contains an inline text pointer that is incremented on each call, and returns the next character in the accumulator. You don't pass a pointer to STRFAC; you have to manually point the inline text pointer to your string before calling STRFAC.

This character fetching routine is called CHRGET, and it's a bit odd. It increments the pointer before reading the character. Therefore, if you set up the pointer to your string and just call CHRGET, it'll skip the first character! Furthermore, the STRFAC routine requires that the first character already be in the accumulator when it gets called. There are a couple of ways to meet these conditions. You can set the text pointer to one byte before where your string begins, then call CHRGET. This increments the pointer and loads the first character of your string into the accumulator. Then you can call STRFAC. Alternatively, you can set the pointer directly at the start of your string, and then jump into the CHRGET routine just beyond the point where it increments the text pointer. This alternative start point is lovingly referred to as "CHRGOT." Either works.
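The increment-before-read behavior is easy to get wrong, so here is a toy Python model of it. The class and its names are made up for illustration. It stands in for the zero page routine only loosely, but it also skips spaces the way BASIC's fetch routine does.

```python
class TextFetcher:
    """Toy model of the TXTPTR / CHRGET / CHRGOT behavior described above."""

    def __init__(self, memory, txtptr):
        self.memory = memory      # bytes standing in for C64 RAM
        self.txtptr = txtptr      # index standing in for the inline text pointer

    def chrget(self):
        self.txtptr += 1          # increment first...
        return self.chrgot()      # ...then read

    def chrgot(self):
        ch = self.memory[self.txtptr]
        if ch == 0x20:            # a space is skipped by fetching again
            return self.chrget()
        return ch

memory = b"\xff12.5\x00"          # one unrelated byte, then the string at index 1
START = 1

a = TextFetcher(memory, START - 1)   # option 1: point one byte before, call CHRGET
b = TextFetcher(memory, START)       # option 2: point at the string, enter at CHRGOT
wrong = TextFetcher(memory, START)   # the mistake: point at the string, call CHRGET

print(chr(a.chrget()), chr(b.chrgot()), chr(wrong.chrget()))   # 1 1 2
```

Both valid setups deliver the first character, "1", while the naive setup has already skipped ahead to "2".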

After calling STRFAC, your floating point number is waiting in FAC. The string format it parses is exactly what can be accepted by BASIC. You can optionally start with a plus or a minus. You can have up to one decimal point. And you can optionally use an "e" followed by a positive or negative number to use as an exponent. Also, spaces left in the string will be ignored. Which is nice, because you can use spaces as thousands separators. Here are some examples of valid string formats:

- +12

- -15.4

- 13.8e9

- 1 000 000

- 10e-5

- etc.

The string must be terminated by a null ($00) or a colon (:) or some other non-numerically valid character.
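The rules above can be approximated with a small Python parser. This is a sketch of the accepted grammar as described in this post, not a port of the ROM routine, and edge cases may well differ on real hardware. The function name is hypothetical, accepting a lowercase as well as an uppercase "e" is an assumption, and malformed input (no digits, or a dangling exponent) simply raises ValueError here.

```python
def parse_number(text):
    """Return (value, chars_consumed) for a BASIC-style number string."""
    digits = "0123456789"
    out = []                                  # characters kept for float(), spaces dropped
    seen_digit = seen_point = seen_e = False
    i = 0
    while i < len(text):
        ch = text[i]
        if ch == " ":
            pass                              # spaces are ignored
        elif ch in digits:
            seen_digit = True
            out.append(ch)
        elif ch in "+-" and (not out or out[-1] in "eE"):
            out.append(ch)                    # a sign only at the start or right after e
        elif ch == "." and not seen_point and not seen_e:
            seen_point = True
            out.append(ch)                    # at most one decimal point
        elif ch in "eE" and seen_digit and not seen_e:
            seen_e = True
            out.append(ch)
        else:
            break                             # null, colon, or anything else ends the number
        i += 1
    return float("".join(out)), i

print(parse_number("1 000 000"))     # (1000000.0, 9)
print(parse_number("-15.4:rest"))    # (-15.4, 5)
```

Returning the number of characters consumed mirrors the idea that parsing stops at the terminator, leaving the rest of the text untouched.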

And here are the addresses for the routines and pointers:

| Routine | Address | Operation |
|---|---|---|
| TXTPTR | $7A/$7B | Pointer to string |
| CHRGET | $73 | INC TXTPTR. (TXTPTR) -> A |
| CHRGOT | $79 | (TXTPTR) -> A |
| STRFAC | $BCF3 | Float String -> FAC |

Next we want to take a floating point number and convert it to a string. This is easier than converting a string to a float. You simply call FACSTR, and it will convert the floating point number currently in FAC to a string in temporary memory. That temporary memory, interestingly, overlaps the low bytes of the stack, a place the stack rarely reaches unless it's about to overflow. A string conversion of a C64 float never results in more than about 10 characters. In any case, a pointer to the start of the string is returned in A/Y.

| Routine | Address | Operation |
|---|---|---|
| FACSTR | $BDDD | FAC -> A/Y |

The second category of conversion then is converting to and from integers. The convention from C is that the default designation "int" is signed, and the unsigned versions are preceded by a "u" as in "uint". The BASIC ROM has routines to convert to float from int8, uint8, and int16. I'll show how to convert from uint16, but it requires a bit of prep.

int8

    LDA xx     ;Signed 8-bit value
    JSR $BC3C  ;Float result -> FAC

uint8

    LDY xx     ;Unsigned 8-bit value
    JSR $B3A2  ;Float result -> FAC

int16

    LDY xx     ;Signed 16-bit lo byte
    LDA xx     ;Signed 16-bit hi byte
    JSR $B395  ;Float result -> FAC

uint16

    LDY xx     ;Unsigned 16-bit lo byte
    LDA xx     ;Unsigned 16-bit hi byte
    STY $63    ;Lo byte -> FAC mantissa 2
    STA $62    ;Hi byte -> FAC mantissa 1
    LDX #$90   ;Exponent for a 16-bit integer
    SEC        ;Carry set = treat as positive
    JSR $BC49  ;Float result -> FAC
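Why $90 for the exponent? As I understand it, $90 is 128 + 16, which places the binary point just after the 16 bits sitting in mantissa 1 and 2. The ROM routine then normalizes the result. Here is a rough Python model of that idea, with a made-up function name. It mirrors the setup in the uint16 listing but is not a trace of the ROM code.

```python
def uint16_to_fac(value):
    """Model the uint16 setup: value in mantissa 1-2, exponent $90, then normalize."""
    if not 0 <= value <= 0xFFFF:
        raise ValueError("expected an unsigned 16-bit value")
    mantissa = value << 16            # hi byte -> mantissa 1 ($62), lo byte -> mantissa 2 ($63)
    exponent = 0x90                   # $90 = 128 + 16: binary point after 16 bits
    if mantissa == 0:
        return 0, 0                   # zero is represented by a zero exponent
    while (mantissa & 0x80000000) == 0:
        mantissa <<= 1                # shift left until the top bit is set...
        exponent -= 1                 # ...and lower the exponent to compensate
    return exponent, mantissa

exponent, mantissa = uint16_to_fac(9)
print(hex(exponent), hex(mantissa))              # 0x84 0x90000000
print(mantissa / 2**32 * 2**(exponent - 128))    # 9.0
```

The last line decodes the result again to confirm that the normalized exponent and mantissa still represent 9.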

Conversion from int24, uint24, int32 and uint32 requires similar, but more, preparation. I'll leave those to be looked up in Advanced Machine Language for the Commodore 64, which I'll be linking to at the end of this post.

Lastly, conversion from float to int. This is much simpler. The float must be in FAC, and the result is always converted to an int32 (signed 32-bit int, two's complement). The result is stored, big endian, in the FAC's mantissa. That is, the most significant byte in $62, the least significant byte in $65.

Before conversion, the fractional part is removed. The remaining whole part must fit within the range of a signed 32-bit int: −2,147,483,648 to 2,147,483,647. There is no range checking, so you should check for yourself that the exponent is less than $A0, or less than 160. Why 160? Remember, the exponent is signed with an offset of 129. 159 − 129 is 30, which is the maximum number of places the binary point can be moved, and still remain within the signed 32-bit range.
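The exponent check and the big endian result layout can be tried out in Python. This is a sketch with hypothetical function names. It computes the exponent byte a value would have under the offset-129 convention used in this post, and shows how a signed 32-bit result would sit in the four mantissa bytes.

```python
import math

def exponent_byte(value):
    """Exponent byte this value would have in the FAC (0 for zero)."""
    if value == 0:
        return 0
    _, exp = math.frexp(abs(value))   # abs(value) = frac * 2**exp, 0.5 <= frac < 1
    return exp + 128                  # same as (exp - 1) + 129

def fits_int32(value):
    """The suggested pre-check: exponent must be below $A0."""
    return exponent_byte(value) < 0xA0

def int32_in_mantissa(n):
    """The four result bytes as they would sit in $62..$65, big endian."""
    return list(n.to_bytes(4, "big", signed=True))

print(fits_int32(2147483647.0), fits_int32(2147483648.0))   # True False
print([hex(b) for b in int32_in_mantissa(-2)])              # ['0xff', '0xff', '0xff', '0xfe']
```

One thing this makes visible: the check is slightly conservative. The value −2,147,483,648 is within the int32 range, but its exponent is exactly $A0, so the check rejects it.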

Conclusion

There is a lot of detail that goes into how floating point numbers are structured and how they work. But as it turns out, you don't really need to worry about most of the details. Knowing the addresses of the routines, and their expected inputs, should be enough to get anyone up and running.

Everything I know about floating point math on the C64, I learned from the Advanced Machine Language book for the Commodore-64, published 1984 by Abacus Software, in combination with a pencil and paper, a good base-converting scientific calculator, and the fully commented disassembly of the C64 BASIC and KERNAL ROMs.

The Advanced Machine Language Book for the Commodore-64, Abacus Software 1984.

It is a wonderful book. And fortunately, for those of you who don't have a physical copy, the complete book is available at archive.org.

The Advanced Machine Language Book for the Commodore-64 (1984, Abacus Software)

I hope I have not simply regurgitated what is already written in this book. The book goes into significantly more technical detail, and if you are interested in that, I highly recommend reading it.

I found it a bit challenging. Many of their descriptions take leaps that can at first leave you wondering how they got from here to there. After working through the text, understanding it, and writing test code to confirm my understanding, I've extracted out of it the bits I need to make the gamut of floating point features readily available to C64 OS. In writing this post I've left out some of the most technical bits, and tried to fill in some of the explanatory gaps the book left.

I hope someone finds this information useful.

- It's also called the significand, but I'll stick with the word mantissa, because that's how all the Commodore 64 documentation refers to it. [↩]

- In C64 OS, the standard is to use the X and Y registers to pass a pointer, low byte high byte respectively. However, BASIC tends to more frequently use A and Y for this. [↩]

- The processor's internal architecture is way above my head. But, when I look at a block diagram like this one I think, well maybe what I'm thinking about is something like that "B Input Register (BI)" that feeds into the ALU. The A Input Register, which also feeds into the ALU, is probably getting loaded from the accumulator, in operations like ADC and SBC, or EOR, AND, and ORA. [↩]

- I'm not sure if these routines have "official" names. In the fully commented disassembly of the BASIC and KERNAL ROMs there are many comments, but no concise names for the routines.

In Advanced Machine Language for the C64 names are given for the routines, but I don't like many of them. I find them inconsistent or confusing. So I've renamed/relabeled them for use in C64 OS source code and related documentation.

Ex. Rather than ASCFLOAT I call it STRFAC. The name convention is FROMTO. MEMARG copies from memory to arg. ARGFAC copies from ARG to FAC. Similarly then STRFAC converts a pointed to string to the FAC. [↩]


Greg Naçu — C64OS.com