Base Conversion in 6502 (2/2)

Very close to a month ago I wrote an article called Base Conversion in 6502. That piece was a part 1 of 2 because I felt like it was getting a little long. In part 1, I went through the process of converting an ASCII string representation of a number to the actual integer equivalent.

This piece is Base Conversion in 6502, part 2. In this article I'm going to walk through the reverse procedure: how to convert an integer into a string representation of that number. In either direction the base of the representation is arbitrary. In the original I showed converting from a string representing a number in base10, but it would be just as easy to convert if the string held a number in base16, or base2, or base8 or whatever. Similarly, when we convert an integer into a string representation we can choose what base we want it to be converted to.

I feel like there was some confusion over my terminology in part 1, because I was referring to integers as numbers in hex, so I'd say, convert a string number to hex. When the CPU deals with integers, say to add them together, the integer is whatever its bits are counted in binary. So, if you have a byte in memory, and the byte's bits are 0000 0010, then we can say that as far as the CPU is concerned that's the integer 2. And it's super easy for the computer to add that to some other integer like 3 (0000 0011), to produce a one byte integer result 5 (0000 0101). If these numbers were in PETSCII, though, they would be 50 == "2" (0011 0010), 51 == "3" (0011 0011), and 53 == "5" (0011 0101), and so on.

The reason I used the words converting between a string and hex, is because in the assembler ints are most frequently typed in hex notation. And when assembled that number typed in hex is represented in the CPU as an integer.

Meanwhile, you can represent a number in binary, in a string! Where each bit is represented by a whole byte in the string, either "0" (48, 0011 0000), or "1" (49, 0011 0001). So, converting an integer like 5 (0000 0101) to a string representation in base2, it would be converted to the string "00000101" which is actually 8 bytes long, 48 48 48 48 48 49 48 49. Okay, here we go.
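
To make the difference between the integer and its base2 string concrete, here is a minimal sketch in Python. Python is used purely for illustration and is not part of the original program, and `to_binary_string` is a made-up name. It relies on the fact that "0" and "1" have the same codes (48 and 49) in ASCII and PETSCII.

```python
def to_binary_string(value):
    """Return the 8-character base2 string for a one-byte integer."""
    if not 0 <= value <= 255:
        raise ValueError("value must fit in one byte")
    digits = ""
    for bit in range(7, -1, -1):            # most significant bit first
        digits += "1" if (value >> bit) & 1 else "0"
    return digits

text = to_binary_string(5)
codes = [ord(ch) for ch in text]            # one whole byte per bit of the input

print(text)    # 00000101
print(codes)   # [48, 48, 48, 48, 48, 49, 48, 49]
```

One byte of integer turns into eight bytes of string, which is why the two forms can't be used interchangeably.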

Convert an INT to a string representation

This is the opposite process. We have an int (an integer), but we want to print it out on the screen in characters that are readable by a human.

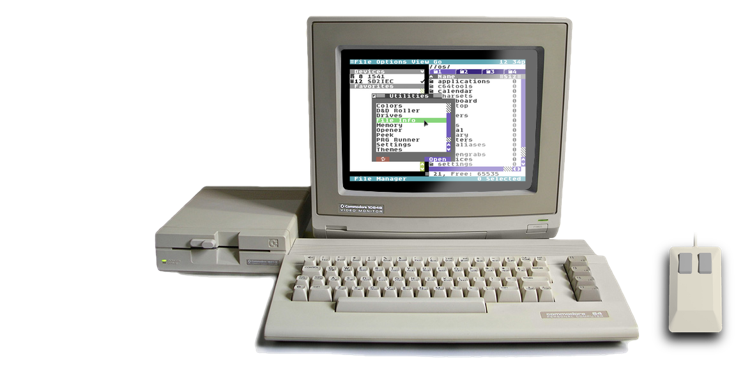

What would happen if you didn't bother to do a conversion and just dumped the byte directly to screen memory? Well, imagine your byte was the integer 83. If you dump that byte to the screen the VIC II will draw it as screen code 83, it'll draw a single character that looks like a heart. How would you then figure out what that byte really is? You'd have to refer to a screen codes chart, search through the chart for a heart and then see that the heart is 83, bingo.

What are we really doing when we do this? Well, it's kinda like representing the number in base256. Base10 has 10 symbols (0,1,2,3,4,5,6,7,8 and 9), and if the integer is greater than 9 we need a second character to hold another of the 10 symbols. Base2 has 2 symbols (0 and 1), and if the int is greater than 1 we need another character for another either "0" or "1". Base256 would mean we need 256 unique symbols. And if the integer is greater than 255 then we'd need a new character with another one of our 256 symbols. But, that's what screen codes are; 256 unique symbols. A one byte integer requires exactly one screen code character.

But that would be terrible, it would take forever to figure out what the number is in a way that we understand it. And what's worse, many screen codes look very similar to each other. 116 and 117 are both left vertical bars, 117 is just a single pixel wider than 116. So, representing the number in a base256 string is not really workable. We need to convert to some smaller base.

The easiest way to get something we could describe as human readable is to convert to a string that is in base16, or hexadecimal. The reason this is easiest is because the 8 bits of the underlying int break straight down the middle: 4 upper bits (the upper nybble), and 4 lower bits (the lower nybble). Each nybble is a number from 0 to 15 and can be used as an index into a lookup table of 16 PETSCII characters, "0123456789abcdef".

I personally use this all the time for debug output when coding. I've got some ints that I'm working with and I need to see what those ints are, so, short of using a monitor which does these conversions for us, I just want to spit out some numbers to the screen.

In fact, I already gave the source for such a conversion routine, inttohex, in the post Implementing Factorial In 6502.

It's very very easy. By shifting right (LSR) four times, the upper four bits are shifted down into the lower nybble and the upper 4 places replaced with zeroes. This is now a number in the range 0 to 15, which can be used as a lookup index into a string of digits, labeled above as hexits.

And to get the lower 4 bits alone is even easier. You simply mask the whole byte using AND #%00001111. Some juggling is required to preserve the numbers as you go, but by and large it is simple, fast and super useful.
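
The nybble trick can be modelled in a few lines of Python. This is only a sketch of the idea, not the inttohex routine itself: `int_to_hex` and `HEXITS` are illustrative names, the right shift by 4 stands in for the four LSRs, and the mask stands in for AND #%00001111.

```python
HEXITS = "0123456789abcdef"    # 16-entry lookup table, one symbol per nybble value

def int_to_hex(byte):
    """Return the two-character hexadecimal string for a one-byte integer."""
    if not 0 <= byte <= 0xFF:
        raise ValueError("byte must be in the range 0..255")
    upper = byte >> 4            # upper nybble moved down, like LSR four times
    lower = byte & 0b00001111    # lower nybble isolated, like AND #%00001111
    return HEXITS[upper] + HEXITS[lower]

print(int_to_hex(83))    # 53
print(int_to_hex(0xA8))  # a8
```

Because both nybbles are always in the range 0 to 15, no division is needed at all, which is what makes base16 so cheap compared to base10.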

So, we're done! No, we're not.

Converting to base16 is really convenient for programmers who are debugging, but most people don't understand hexadecimal because they don't have 8 fingers per hand, like programmers do. Most people have only 5 fingers per hand (the thumb is a finger for this exercise.) So most people want to see the output in base10.

Convert an INT to a string in an arbitrary base

We return now to the article about Large Look-up Tables for Hyperfast, Accurate, 16-Bit Scaled-Integer Math by Garth Wilson over at Wilson Mines Co., the original place where I saw descriptions of the principles of base conversion. Here's his prose description of converting from an int to a string in an arbitrary base:

For converting hex numbers to other bases for output (which will normally be a string), initialize a blank string. You will build it from right to left. Divide your number by what's in variable BASE, and use the remainder to add a text digit to the build, even if it's a 0. Keep doing that until there's 0 in the number.

As before, I'll convert that to pseudo code for you.

Convert from INT to a different base:

    Define BASE.
    Define symbol table with at least BASE-n symbols.
    Initialize a blank string.
    loop:
        Divide number by BASE.
        Use remainder as index into symbol table.
        Prepend symbol to string.
        Go loop until number is zero.
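
The pseudo code translates almost line for line into Python. The following is a hedged sketch rather than the 6502 implementation: `int_to_string` and `SYMBOLS` are names invented for this example, and Python's built-in `divmod` stands in for a division routine. Like the pseudo code, it divides first and tests for zero afterwards, so an input of 0 still produces the single digit "0".

```python
SYMBOLS = "0123456789abcdefghijklmnopqrstuvwxyz"   # enough symbols for bases 2..36

def int_to_string(number, base=10):
    """Convert a non-negative integer to its string form in the given base."""
    if not 2 <= base <= len(SYMBOLS):
        raise ValueError("base must be between 2 and 36")
    if number < 0:
        raise ValueError("number must be non-negative")
    text = ""                                      # initialize a blank string
    while True:
        number, remainder = divmod(number, base)   # divide number by BASE
        text = SYMBOLS[remainder] + text           # prepend the symbol
        if number == 0:                            # loop until number is zero
            break
    return text

print(int_to_string(0xA894))       # 43156
print(int_to_string(0xA894, 16))   # a894
print(int_to_string(5, 2))         # 101
```

Only the `base` argument changes between the three calls, which is the whole point of doing the conversion by repeated division.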

Next let's step through the pseudo code with an example number, seeing how it converts one step at a time.

    BASE = $A (10 in decimal)
    $A894
    --------------------
    loop 0: $A894 / BASE == $10DB (remainder $6)   "6" + ""
    loop 1: $10DB / BASE == $1AF  (remainder $5)   "5" + "6"
    loop 2: $1AF  / BASE == $2B   (remainder $1)   "1" + "56"
    loop 3: $2B   / BASE == $4    (remainder $3)   "3" + "156"
    loop 4: $4    / BASE == $0    (remainder $4)   "4" + "3156"
    --------------------
    "43156"

So before we proceed with an explanation, let's use our calculator and see if the conversion works. 43156 is indeed equal to $A894. That's convenient.

To start we define the variable BASE as $A, that's base 10, because we'd like to see our output string in the nice friendly base 10 we're all used to. But this would work just as well if we were to use some other base instead.

Between the two horizontal rules I've labelled 5 loop iterations, 0 through 4. It just works out that it takes this many steps to get our division result all the way down to zero.

The initial value is our dividend, and BASE is our divisor. After performing the division we get a result and a remainder. That remainder, as it happens, even if it is zero, is the least significant digit of the number in the base we're converting to. In this case, because we're converting to base10, the range of remainders even represented in hexadecimal will only ever be between $0 and $9.

The remainder has to be converted to a PETSCII character. This isn't shown here, but it's very easy. We can see by looking at a PETSCII table that $30 is "0", $31 is "1", $32 is "2" and so on. So to get the PETSCII character version of our remainder integer all we have to do is add $30. The character has to be added to the start of the string. In our implementation we'll take a look at how I might get around to doing that.

As long as the result is not equal to zero we need to loop and continue the process. On each new loop, the result from the previous division becomes the dividend for the next division. In the final loop the dividend ($4) is smaller than BASE ($A) so the result is 0 plus the remainder which will be the final (most significant) digit in the string.
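
The step-by-step behaviour described above can be checked with a short Python sketch. It is an illustration only: `conversion_steps` is a made-up helper that records each dividend, result and remainder, and the digits are then produced by adding $30 to each remainder and reading the remainders from last to first.

```python
def conversion_steps(number, base=10):
    """Return a list of (dividend, result, remainder) tuples, one per loop."""
    steps = []
    while True:
        result, remainder = divmod(number, base)
        steps.append((number, result, remainder))
        number = result              # the result becomes the next dividend
        if number == 0:
            break
    return steps

steps = conversion_steps(0xA894)
for i, (dividend, result, remainder) in enumerate(steps):
    print(f"loop {i}: ${dividend:X} / $A == ${result:X} (remainder ${remainder:X})")

# Each remainder becomes a character by adding $30; the last remainder
# is the most significant digit, so the list is read in reverse.
digits = "".join(chr(remainder + 0x30) for _, _, remainder in reversed(steps))
print(digits)   # 43156
```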

When we converted the other way, our implementation needed a multiplication routine because the 6502 doesn't have a multiply instruction. Converting this direction, our real implementation will need a division routine. We will also need to worry about precision. In this case, as in the example in part 1, I'm opting to settle for 16-bit precision. So our maximum input number is $FFFF and the maximum string representation will be "65535".

Codebase64 to the rescue on the 16-bit division routine.
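
For readers curious how a division routine can work on a CPU with no divide instruction, here is a Python model of the usual shift-and-subtract (restoring) approach. It is a sketch of the general technique under that assumption, not a transcription of the Codebase64 code, and `divide16` is a name chosen for this example. Note how the result bits are shifted into the same variable that held the dividend.

```python
def divide16(dividend, divisor):
    """Divide two 16-bit unsigned integers, returning (result, remainder)."""
    if not 0 <= dividend <= 0xFFFF or not 0 < divisor <= 0xFFFF:
        raise ValueError("operands must be 16-bit and the divisor non-zero")
    remainder = 0
    for _ in range(16):                        # one pass per bit of the dividend
        top_bit = (dividend >> 15) & 1
        dividend = (dividend << 1) & 0xFFFF    # shift dividend left, low bit is now 0
        remainder = (remainder << 1) | top_bit # top bit moves into the remainder
        if remainder >= divisor:               # does the divisor fit?
            remainder -= divisor
            dividend |= 1                      # record a 1 bit in the result
    return dividend, remainder                 # dividend now holds the result

result, remainder = divide16(0xA894, 10)
print(hex(result), remainder)   # 0x10db 6
```

After 16 passes every bit of the original dividend has been shifted out and replaced by a bit of the result, so no separate result variable is needed.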

INT to a String number Implementation

And here is my implementation of the above. Works just as you'd hope.

Now let's walk through this a line at a time and see how it works.

The division routine that I grabbed from Codebase64 requires three 16-bit numbers in zero page. These are the first constants defined under Workspace. You only need to specify the low byte of these addresses. We have one for divisor, one for dividend and one for remainder. The result in this routine actually is the same address as dividend, which means dividend will continually be overwritten by result. This turns out to be super handy for our use case, as we'll see shortly.

Base is defined as 10, petoffset is the number that needs to be added to an int between 0 and 9 to produce the PETSCII characters "0" through "9". I make this a constant, because I hate magic numbers being sprinkled through code. And chrout is defined here as $FFD2, which is the KERNAL address for outputting a PETSCII character to the screen.

The code starts at $0801, that's where BASIC programs start. The first code encountered is the BASIC preamble. In my post Implementing Factorial In 6502 I talk a bit about how the preamble works. But here I'd like to say that rather than just spitting out a series of inscrutable bytes, the assembler actually offers a variety of features that make producing the preamble from memory quite a simple task.

The preamble is structured as a BASIC program. First a 2-byte pointer to the next line, this can be done with .word and a label, "end" in this case. Next is a 2-byte line number. This is easy to do with .word 10, that makes a 16-bit line number 10. Next we have a 1-byte BASIC token, this is the hardest part to remember, .byte $9e, that's the token for SYS. Next the SYS wants a memory address, encoded in PETSCII (ironic considering the nature of this article series), plus the BASIC line ends with a null byte $00. This can be very easily produced by the assembler with the .null keyword. The following quoted string is in PETSCII, and it automatically gets null terminated. To end the BASIC program we want a 16-bit $0000, this is easily produced with .word $00, and this is also the line that is pointed to by the first line's next line pointer. So it takes the "end" label. And boom, that's easy to type up from scratch, and is even comprehensible to read.
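
To see what bytes such a preamble assembles to, here is a small Python sketch that builds them by hand. It rests on assumptions not stated above: that the machine code begins immediately after the preamble and that the SYS address is written as four decimal digits. With a load address of $0801 that works out to SYS 2061. The function name `basic_preamble` is made up for this example.

```python
import struct

SYS_TOKEN = 0x9E   # BASIC token for SYS

def basic_preamble(load_address=0x0801, line_number=10):
    """Build the bytes of a one-line BASIC program: <line_number> SYS <address>."""
    # Line layout: next-line pointer (2) + line number (2) + token (1)
    #              + 4 address digits + null terminator (1) = 10 bytes.
    end = load_address + 10            # where the "end" label would land
    target = end + 2                   # assumed: code follows the final $0000
    digits = str(target).encode("ascii")   # digits share codes with PETSCII
    if len(digits) != 4:
        raise ValueError("this sketch assumes a 4-digit SYS address")
    line = struct.pack("<HH", end, line_number) + bytes([SYS_TOKEN]) + digits + b"\x00"
    return line + struct.pack("<H", 0)     # 16-bit $0000 ends the BASIC program

print(basic_preamble().hex(" "))   # 0b 08 0a 00 9e 32 30 36 31 00 00 00
```

The bytes 32 30 36 31 are the PETSCII digits "2061", which is the decimal form of $080D, the first address after the twelve preamble bytes.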

The main program starts at line 24. We start by putting our BASE into the divisor. This will not change, so it only has to be done once. Even though the divisor fits within 8-bits, the assembler notation of #> and #< will break it into $00 and $0A for us.

Next we put the number we want to convert into the dividend the same way we populated divisor. I've hardcoded $A894, but in a real program you'd probably be pulling this from somewhere.

The loop starts at line 36. The first thing it does is check to see if dividend is 0. If it is, we're done and we can leave the loop to output our converted string. Dividend is a 16-bit number, but checking to see if it's zero can most easily be accomplished by loading one of its bytes, and then ORing that with the other byte. If the result of both bytes ORed together is still zero, then the whole dividend is zero, and the conversion is complete.
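
The OR trick works because a bitwise OR can only produce zero when every bit in both inputs is zero. A tiny Python model, with the illustrative name `is_zero16` and the two bytes passed in separately as they would sit in zero page:

```python
def is_zero16(low_byte, high_byte):
    """Return True only if both bytes of a 16-bit value are zero."""
    return (low_byte | high_byte) == 0   # any set bit in either byte survives the OR

print(is_zero16(0x00, 0x00))   # True
print(is_zero16(0x94, 0xA8))   # False, this is $A894
print(is_zero16(0x00, 0x01))   # False, this is $0100
```

The third case is the one that matters: testing only the low byte would wrongly report $0100 as zero.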

At line 40 we JSR to divid16. This routine overwrites the dividend with the result of the division, as well as populating the remainder, even if the remainder turns out to be zero. Because we're dividing by such a small number, $0A, remainder can only ever be a value from $00 to $09, which also means that remainder's high byte will always be $00 and can be ignored.

Lines 42, 43 and 44 load the remainder's low byte and add the PETSCII offset to it. Lines 46, 47 and 48 put the PETSCII character into the string. The string, str, is a pre-defined buffer that starts as 5 spaces, null terminated, at line 64. Line 63 is an index variable that starts at 4. That's the offset to the end of the string buffer. When we add the character to the string we add it at the index, then decrement the index. This is how I've decided to do the string prepending. It means I don't have to shift anything, but it also means the final string will always be 5 characters long, and any unused places are padded with spaces.
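
The buffer-and-index approach to prepending can be modelled in Python as follows. This is a sketch of the technique as described, not the assembly itself: `fill_buffer` and `PETOFFSET` are illustrative names, `divmod` stands in for the division routine, and the zero test sits at the top of the loop as it does in the walkthrough.

```python
PETOFFSET = 0x30   # added to 0..9 to get the characters "0".."9"

def fill_buffer(number):
    """Write the base10 digits of a 16-bit number right-aligned into a 6-byte buffer."""
    if not 0 <= number <= 0xFFFF:
        raise ValueError("number must fit in 16 bits")
    buffer = bytearray(b"     \x00")   # 5 spaces, null terminated
    index = 4                          # offset of the last digit position
    while number != 0:                 # zero check at the top of the loop
        number, remainder = divmod(number, 10)
        buffer[index] = remainder + PETOFFSET   # store the digit at the index
        index -= 1                              # then step one place to the left
    return bytes(buffer)

print(fill_buffer(0xA894))   # b'43156\x00'
print(fill_buffer(42))       # b'   42\x00'
```

A 16-bit number never needs more than 5 decimal digits, so the index cannot run off the front of the buffer. One side effect of testing for zero first is that an input of 0 leaves the buffer as 5 spaces.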

Line 50 jumps back to the start of the loop, which will check the dividend to see if it's zero yet. The convenient thing here is that we don't need to explicitly move the result of the previous division into the dividend, because that happens automatically in the divid16 routine. Convenient.

Lastly, we want to output the finished string to the screen. This is done starting at line 53. We simply use the .X register to walk through the bytes of the string and call chrout on each byte to put them on the screen. The loop ends when the string's null byte is reached. And we return to BASIC. That's it.

So, we load it up in VICE and lo and behold, 43156 gets spat out onto the screen. Looks like it works! This concludes this two part series on INT to String and String to Int conversion. Hopefully someone in the world who's learning 6502 will one day stumble upon this and find it useful.

If you want to download the program and try it out, or inspect how it assembled, you can download it here.