
Image Search and Conversion Service

Let's begin with a fun update on something I've been working on.

It's summertime, and I wanted my son to have a project. I've tried introducing him to programming, on the C64 in BASIC, but he's a bit young still to really grasp the important concepts. He loves games though; he likes card games like Munchkin and the Star Trek CCG, and he likes board games. We also play some dice games. There is a simplified version of a game called Mexico. You just need four ordinary 6-sided dice. One die each is used as your life counter, and starts at 6. You then roll one die each and compare their results. If they're the same no one takes damage this round, and you go on to the next round by rolling again. Whoever's die has the lower number takes one point of damage, which you track by rotating your life counter die. Whoever gets reduced to zero first loses. It's simple, but it's fun.

One day we decided to use a whiteboard and some markers to flesh out this Mexico-like game with more rules. We just started making the rules ad hoc, and if they weren't power balanced, we tweaked them. The game had weapons, and the ability to defend against attacks, and the ability to regenerate health. And hit points started at 100 (instead of 6). That was really fun! But it only lasted until someone erased the whiteboard, and now I can't remember the details of the rules we came up with.

Based on this, I came up with an idea for a simple game that can be designed on paper, that I would program in BASIC for the C64. My son is involved in making the maps, the level design. And I want to use the project to teach him about the parts that go into a program like this, with a fun and engaging end product. Essentially, there is a grid of rooms. Each room may have a door on any of its four sides that leads to an adjacent room. Like the dungeons in the original Legend of Zelda, only a much simpler implementation. Each room may optionally have one object.

The objects consist of:

- A key (one of 3 colors: red, green or blue)

- A monster (one of 2 difficulties)

- A potion (gives back a fixed number of hit points)

- A weapon (boosts your attack)

- A shield (boosts your defense)

- A treasure (the goal of the game)

Doors can be locked with a color matching one of the three key colors. The rooms are then connected together to form a maze. You can only carry one key of each color at a time.
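To give a feel for how little data this design needs, here is a minimal Python sketch of the room grid. It is not the game's actual BASIC code or level file format, neither of which is shown here. The names `Room`, `Door`, `try_move` and `pick_up_key` are hypothetical. The sketch assumes that each door is stored on the side of the room it belongs to and that a locked door only requires the matching key to be carried; whether a key gets used up is not modeled. A set of colors naturally enforces the "one key of each color" rule.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set, Tuple

# Row/column offsets for moving through a door on each side of a room.
DIRECTIONS = {"N": (-1, 0), "E": (0, 1), "S": (1, 0), "W": (0, -1)}
KEY_COLORS = ("red", "green", "blue")


@dataclass
class Door:
    lock: Optional[str] = None  # None means unlocked; otherwise a key color


@dataclass
class Room:
    doors: Dict[str, Door] = field(default_factory=dict)  # side -> door
    obj: Optional[str] = None  # at most one object per room


def pick_up_key(keys: Set[str], color: str) -> bool:
    """Only one key of each color may be carried at a time."""
    if color not in KEY_COLORS or color in keys:
        return False
    keys.add(color)
    return True


def try_move(grid: List[List[Room]], pos: Tuple[int, int],
             side: str, keys: Set[str]) -> Tuple[int, int]:
    """Return the new position, or the old one if the way is blocked."""
    row, col = pos
    door = grid[row][col].doors.get(side)
    if door is None:
        return pos  # solid wall on this side
    if door.lock is not None and door.lock not in keys:
        return pos  # locked, and no key of the matching color
    d_row, d_col = DIRECTIONS[side]
    return (row + d_row, col + d_col)
```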

If you enter a room with a monster, there are some rules for turn-based combat, based on two attributes: attack and defense, plus the random bonus from a D6 roll, plus the bonus from an item you've found (weapon or shield). There is also a mechanic for fleeing.

You navigate around the maze of interconnected rooms, find the keys to unlock more areas of the map, fight the monsters, find a weapon, a shield or both to improve your odds in combat against the monsters, and strategically use the potions to regenerate lost health. Ultimately you are looking for the treasure, in some room deep within the maze. Then you have to return to the start point with the treasure to solve the level. The level data is stored in external files, so the mazes and the layout of items and monsters are fully customizable.

I've thrown in a simple points system. Fleeing a monster causes you to lose points, but you gain points for killing a monster. You also gain points for finding items. This was done because in playtesting (on paper), Gabriel quickly figured out that it was safer to just always flee from the monsters rather than fight them. And if you're not going to fight them, why bother trying to find the weapon and shield?

I'll give more updates with the progress of the game as it develops. But, here are a couple of screenshots for now.

I've built out more of it since the description above was written. There is now a title screen with the story. Plus, rooms support secret doors that you can't see until you try to pass through them. You get points for discovering secret areas. Here's a little video I uploaded to Twitter showing my progress.

Another update on this little maze/RPG I’m doing in BASIC and PETSCII. #c64 I’ve done: Navigation, collecting items, the combat mode, level changing, locked doors, hidden areas, and I just added the intro/title screen. My son has been play testing!! :) pic.twitter.com/aAOfCEVWhI

— Gregorio Naçu (@gregnacu) July 23, 2021

Digital Ocean, RetroPixels

C64OS.com is hosted on Digital Ocean.[1] But I had hosted the sub-site services.c64os.com just on an iMac in my house. I had it set up that way because I was just playing around with how it could work, and I had compiled a bunch of command line tools for the Mac. At the time I didn't want to go through the hassle of getting it all working on Linux.

Last summer, that 10-year-old iMac left us for the great digital bitbucket in the sky. And along with it, it took all of services.c64os.com. I had a backup, but I was in a bit of a crunch period with getting my work environment set back up, and it was the middle of the pandemic and my kids were home with me, etc. Long story short, I have recently been rebuilding services.c64os.com on Linux at Digital Ocean.

This originally included an image conversion webservice, which I blogged about very early on here, and then expounded upon here as I started to build it out.

You give it a URL to an image (GIF, PNG or JPG) and it gives you a ready-to-display C64 image file in either Koala (multi-color), Hires or FLI format. To do the conversion, it uses NetPBM and the C64GFX Lib by Albert Pasi Ojala.[2] These tools are tried and true, but they haven't seen an update in many years. More recently I discovered RetroPixels, a modern set of image conversion tools by Michael de Bree. I haven't put RetroPixels to use in the C64 OS services yet, but I'll be playing around with those options soon. I've got RetroPixels compiled and working now on the Linux instance at Digital Ocean.

So, the image conversion service is working again. Try it out here. Paste in a URL to an image and hit "Convert."

Note: The filename must bear the extension of the image type: jpg, jpeg, gif or png.

Here are some examples:

https://www.lovethispic.com/uploaded_images/16142-Some-People.jpg

https://www.freepnglogos.com/uploads/bmw-brands-logo-image-1.png

https://animalia-life.com/data_images/monkey/monkey5.jpg

I am frankly still highly impressed with the realism you can get from a C64's resolution, color palette and color limitations, when converted from a photo.

DuckDuckGo Image Search

I already had the image conversion part working. The problem has always been, if you're sitting in front of your C64, how do you get the URL for an image to convert? I'd originally planned that the URL would come via an HTML or RSS conversion service.[3] The HTML conversion service would form the backbone of a way to surf the web, and when you encountered an image its URL could be fed back to the image conversion service so you could view it. That's still a good plan and in the works. But in the shorter term, I thought it made sense to make an image search webservice.

I found a NodeJS project called ddg-image-search. It's based around DuckDuckGo's image search engine (which I think is itself built on Microsoft's Bing image search, judging by the URLs that come back). So I made this into a service. It's very straightforward. You call the service and pass it a set of search keywords, and it returns a block of results in plaintext. Every result is exactly 128 bytes, for extremely easy parsing and memory management.

It's 64 bytes of a description followed by 64 bytes for a DuckDuckGo image URL (they all fit within 64 bytes.) If the description exceeds 63 bytes, it is truncated to 63 bytes plus the 1 byte string terminator. Either of these two fields that is less than 64 bytes is padded out with 0 bytes to the end. By default, you get 4 results, for exactly 512 bytes or 2 pages of memory (which can be allocated by the C64 OS memory page allocator.) There are options to vary the number of results, up to 200, and the offset into the results. So you could request 8 results starting at 0, then request 8 more starting at 8, etc.
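Because every record is a fixed 128 bytes, parsing a block of results is just slicing. Here is a minimal Python sketch of the layout described above. `parse_results` and `c_string` are hypothetical names, and decoding the fields as ASCII is an assumption, since the service's character set isn't spelled out here.

```python
from typing import List, Tuple

RECORD_SIZE = 128  # each result is exactly 128 bytes
FIELD_SIZE = 64    # 64-byte description, then a 64-byte image URL


def c_string(field: bytes) -> str:
    """Decode a zero-padded field; a full 64-byte field has no terminator."""
    return field.split(b"\x00", 1)[0].decode("ascii", errors="replace")


def parse_results(block: bytes) -> List[Tuple[str, str]]:
    """Split a result block into (description, url) pairs."""
    if len(block) % RECORD_SIZE:
        raise ValueError("result block is not a whole number of records")
    results = []
    for offset in range(0, len(block), RECORD_SIZE):
        record = block[offset:offset + RECORD_SIZE]
        description = c_string(record[:FIELD_SIZE])
        url = c_string(record[FIELD_SIZE:])
        results.append((description, url))
    return results
```

On the C64 the same idea needs no parsing at all: result n starts n * 128 bytes into the buffer, and its URL starts 64 bytes after that.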

You can thus make a search request using your C64, and then feed an image URL from those results back to the image conversion webservice. And bingo, you can now use those two services in conjunction for a complete round trip: image search, and then image fetch!

You have no idea how cool I find this. Using just your C64, and a wifi modem, you can now search for and fetch almost any image on the web. It means, you can use your C64 to find and consume modern content. For the first time, really, your C64 isn't limited to showing you just what someone else out there in the world has made available to the C64.

You can read about this service at: http://services.c64os.com/about#imagesearch
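The glue between the two services is small: take an image URL from a search result and turn it into a request path for the conversion service. Here is a Python sketch that assumes (based only on the `if=jpg` example used in this post) that the `if` parameter takes the file's extension; the helper name `image_request_path` is hypothetical. Percent-encoding the nested URL is a precaution this sketch adds so that an `&` or `?` inside the image URL isn't mistaken for the service's own parameters.

```python
from urllib.parse import quote, urlsplit

# File extensions the conversion service accepts.
SUPPORTED = {"jpg", "jpeg", "gif", "png"}


def image_request_path(image_url: str) -> str:
    """Build a /image request path for an image URL (hypothetical helper)."""
    path = urlsplit(image_url).path
    ext = path.rsplit(".", 1)[-1].lower() if "." in path else ""
    if ext not in SUPPORTED:
        raise ValueError(f"unsupported image type: {ext!r}")
    # Encode the nested URL; ':' and '/' are left as-is for readability.
    return f"/image?if={ext}&u={quote(image_url, safe=':/')}"
```

For a plain URL the encoding changes nothing: `image_request_path("https://www.c64os.com/resources/c64c-c64os-system.jpg")` gives `/image?if=jpg&u=https://www.c64os.com/resources/c64c-c64os-system.jpg`.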

WiFi Modems

I tweeted out some photos of myself using my C128 to access this service, and got kudos because, you know what? It actually is pretty cool!

Using C64NET (ZiModem) Wifi Modem’s WGET program to test my new image search webservice, and then image fetch/conversion service. After some debugging effort, it works! #c64 I can now search for and fetch images with just the C64! pic.twitter.com/neqdifaieS

— Gregorio Naçu (@gregnacu) July 7, 2021

Yeah, this is the bomb. I can now freely search the web for any image, by keyword search, and then download C64-ready conversions of those images, all on my C64 with a WiFi modem. This just needs a UI now. #c64 pic.twitter.com/KbKEOMV6Jf

— Gregorio Naçu (@gregnacu) July 7, 2021

The inevitable question is, "Wow! That's cool, but, how can I do this?"

And here we come to how WiFi modems are so much cooler than just a modern way to access a BBS. BBSes are fun, and they're retro, and there is a nostalgia factor. I get it. And I've had a lot of fun playing around with BBSes too. But they only scratch the surface of what a WiFi modem will let you connect to.

A WiFi modem lets you open a TCP/IP socket. Any TCP/IP socket, to any server on any port. Once a TCP/IP socket is open, the data that goes back and forth across it is very similar to a simple one-to-one dialup modem connection. That's why they're so ideal for hosting modern BBSes. But if you open a socket connection to a webserver, you can speak the (remarkably simple) HTTP protocol over that two-way connection, and then read the result either into memory or straight to disk (or to an REU or whatever, if you want to get fancy.)

I'm a big proponent of the ZiModem firmware, because it's more advanced than most of the others. It allows for more than one socket connection at the same time. And multiple listening sockets, and packetized interleaving of data being read from those sockets, etc. But let's just leave all that aside for now, because to speak HTTP and get a response, you only need a single outgoing socket, which every WiFi modem can do.

Every modem has its own quirky command set, but usually the way to open a standard, streaming, interactive TCP/IP socket is based on the Hayes modem command that tells the modem to dial out: ATD or ATDT, followed by the address and port. Sometimes the address has to be in quotes, sometimes not. Webservers (typically) run on port 80. So here are some examples of connecting to a webserver:

atd"services.c64os.com:80"

atdtservices.c64os.com:80

Both of the above do the same thing. The first is the syntax used by ZiModem; the second is used by the "WIFI 64 modem adapter," which I ordered from Shareware Plus. You have to check the instructions or documentation for your specific modem to know the exact command syntax. This, by the way, is why in C64 OS I am designing drivers that abstract these command sets to a common programming interface.

Note that the address (services.c64os.com) is ONLY the domain name. It doesn't include "http://", because that is what tells a modern computer which protocol to use and directs it to port 80 by default; here we've specified port 80 explicitly. Also note that opening the connection doesn't involve the path to the resource you want to get. It's just the TCP/IP socket connection: a domain name (which gets looked up via DNS, so you could also supply an IP address directly), plus a port number, separated by a colon.
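That kind of driver abstraction can start very small. This Python sketch maps a modem type to the two dial syntaxes shown above. The type names `"zimodem"` and `"wifi64"` and the function name are hypothetical, and other modems would need their own entries. The trailing carriage return is how Hayes-style AT commands are terminated; the bytes are built as ASCII here purely for illustration.

```python
def dial_command(host: str, port: int, modem: str) -> bytes:
    """Format a Hayes-style dial command for a host and port."""
    if modem == "zimodem":
        command = f'atd"{host}:{port}"'  # ZiModem: quoted host:port
    elif modem == "wifi64":
        command = f"atdt{host}:{port}"    # WIFI 64 adapter: unquoted
    else:
        raise ValueError(f"unknown modem type: {modem!r}")
    # AT commands end with a carriage return.
    return (command + "\r").encode("ascii")
```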

Speaking HTTP

Once the connection is established you have to "speak" HTTP, the HyperText Transfer Protocol. To do what we need it to do is very simple.

You send a GET command followed by at least one header line, followed by two linefeeds in a row to indicate that the request is complete. The server replies with header rows, out of which we need to pull the Content-Length. It then ends the headers with two linefeeds, and immediately following the second linefeed comes content that is precisely the size specified by the Content-Length header. That's the data, and you can either write it to disk or store it in memory, if you know how much there is and where in memory it should be stored.

For example, if you're making a request to the image conversion service to convert a graphic to Koala format, the data it will give you back is exactly 10003 bytes, structured like this:

| Size | Content |
|---|---|
| 2 bytes | Load address ($6000) |
| 8000 bytes | Bitmap data |
| 1000 bytes | Screen RAM color data |
| 1000 bytes | Color RAM data |
| 1 byte | Background color |

Given that for this data type you know the size and where in memory it should go, you could load it just as if it were coming from disk. Read the first 2 bytes of the data and use them as the address at which to start storing the remaining bytes; the bitmap data then lands starting at $6000, followed by the rest. Once the data is fully loaded, you have a Koala image in memory exactly where it would be if you had loaded it from disk.
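Here is that loading logic as a minimal Python sketch, using a 64K `bytearray` to stand in for C64 memory. The function names are hypothetical; the offsets follow the standard 10003-byte Koala layout: 8000 bytes of bitmap, 1000 bytes of screen RAM colors, 1000 bytes of color RAM and one background color byte.

```python
KOALA_SIZE = 10003  # 2-byte load address + 10001 bytes of image data


def load_like_kernal(data: bytes, memory: bytearray) -> int:
    """Store data[2:] at the little-endian address held in data[0:2]."""
    address = data[0] | (data[1] << 8)
    payload = data[2:]
    if address + len(payload) > len(memory):
        raise ValueError("data would run past the end of memory")
    memory[address:address + len(payload)] = payload
    return address


def koala_sections(memory: bytearray, base: int) -> dict:
    """Slice a loaded Koala image into its four parts."""
    return {
        "bitmap": memory[base:base + 8000],
        "screen": memory[base + 8000:base + 9000],
        "color": memory[base + 9000:base + 10000],
        "background": memory[base + 10000],
    }
```

With a load address of $6000, the screen colors start at $7F40, color RAM data at $8328 and the background byte sits at $8710.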

First, the GET HTTP command. GET is the HTTP method that tells the server you want to retrieve some resource, and it also tells the server that your request will consist only of headers, because GET requests do not include any body content.

The GET command itself consists of "GET /path/to/resource/file HTTP/1.0" followed by a single newline. This alone might be enough on a very simple server, but on most servers it isn't, because one server (one IP address on port 80) can virtual-host multiple websites. Virtual hosting is done by serving up content out of different webroots depending on the domain name that is used. Now, you might think that because you opened the connection using a domain name (i.e. "services.c64os.com") that that's enough. It's not, though. That domain name is used initially by your WiFi modem's TCP/IP stack to look up the IP address for that domain via DNS (the Domain Name System). Once it has the IP address, it uses the IP address to open the actual connection to the server.

Somehow the server, at the HTTP protocol layer, needs to know what domain was used to make this request. That is done by sending a "host" header following the GET command. All headers consist of the header name, followed by a colon and a single space, followed by the header data, all on one line and ending with a single newline. Like this: "host: services.c64os.com". If you have more headers to include, you keep adding header lines one after the next, each ending with a single newline character. When you have no more headers to send, you send a newline character on its own. In other words, the request ends when the server receives two newline characters in a row.
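Since servers time out slow requests, it pays to build the whole request up front and send it in one go. Here is a minimal Python sketch that does that; `build_get_request` is a hypothetical name. One deliberate deviation from this walkthrough: the HTTP specification calls for a carriage return plus linefeed at the end of each line, so the sketch uses `\r\n`. The bare newlines used in this post got a response from services.c64os.com, but other servers may be stricter. Header names are case-insensitive, so `Host` and `host` are equivalent.

```python
def build_get_request(host: str, path: str) -> bytes:
    """Prepare a complete HTTP/1.0 GET request before connecting."""
    lines = [
        f"GET {path} HTTP/1.0",
        f"Host: {host}",  # tells a virtual-hosting server which site we want
    ]
    # Each line ends in CRLF, and an empty line ends the request.
    return ("\r\n".join(lines) + "\r\n\r\n").encode("ascii")
```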

The full request then looks something like this:

(newlines shown just so you can see them.)

GET /image?if=jpg&u=https://www.c64os.com/resources/c64c-c64os-system.jpg HTTP/1.0\n

host: services.c64os.com\n

\n

Typical webservers won't leave a connection open for very long if the request is not made in a timely fashion. So if you open the connection, and try to type in the GET request manually, there is a good chance it will timeout in the middle of your typing, if you delay too long. It's much more reliable to have the request fully prepared, and stored in a variable ready to be sent as soon as the connection is established.

In response to the above, here's what services.c64os.com sends back:

(newlines shown just so you can see them.)

HTTP/1.1 200 OK\n

Date: Wed, 14 Jul 2021 21:32:15 GMT\n

Server: Apache/2.4.29 (Ubuntu)\n

Content-Disposition: attachment; filename="c64c-system.koala"\n

Content-Length: 10003\n

Connection: close\n

Content-Type: application/octet-stream\n

\n

The response consists of the first line, which has the status code. 200 means OK. You might want to parse this out and confirm that it's 200. Following the status line come multiple headers. Each line ends with a single newline. Each header is structured just like the request headers. The header name first, followed by a colon and a single space, followed by the header data, up to the newline. The headers end with a newline on a blank line, or when two newlines are encountered in a row. And the data begins immediately after the second newline at the end of the headers.

How much data will there be though? That can be parsed out of the "Content-Length" header. You can see in the examples above, it is indeed 10003 bytes. After reading in the 10003 bytes of data, the connection is terminated automatically by the remote end. And you've got yourself a converted Koala image straight from a URL to a GIF, JPG or PNG on the web.
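Parsing the response follows the same steps: read lines up to the blank one, check the status code, and take exactly Content-Length bytes of body. This Python sketch assumes the whole response is already in memory (on a C64 you would process it as it streams in) and accepts either `\n` or `\r\n` line endings; the function name is hypothetical. It refuses responses without a Content-Length header.

```python
from typing import Dict, Tuple


def parse_response(raw: bytes) -> Tuple[int, Dict[str, str], bytes]:
    """Split a complete HTTP response into status, headers and body."""
    lines = []
    pos = 0
    while True:
        newline = raw.find(b"\n", pos)
        if newline == -1:
            raise ValueError("headers are incomplete")
        line = raw[pos:newline].rstrip(b"\r")  # accept \n or \r\n endings
        pos = newline + 1
        if not line:
            break  # blank line: headers are done, body starts at pos
        lines.append(line.decode("ascii"))
    if not lines:
        raise ValueError("missing status line")

    # Status line, e.g. "HTTP/1.1 200 OK"
    status = int(lines[0].split(" ", 2)[1])

    headers = {}
    for line in lines[1:]:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()  # names are case-insensitive

    if "content-length" not in headers:
        raise ValueError("no Content-Length header")
    length = int(headers["content-length"])
    body = raw[pos:pos + length]
    if len(body) < length:
        raise ValueError("body is shorter than Content-Length")
    return status, headers, body
```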

ZiModem and WGET

The problem is, how do you—practically speaking—make use of the information above?

If you could type fast enough, or dump the contents of a file at the modem (something Novaterm can do, for example), you could deliver the GET request and host header simply using a terminal. The problem is that the modem would then dump the results, the response headers and then also the Koala image data, into your terminal program. The binary data would get rendered to the screen, the screen buffer would overflow and most of the data would be uselessly displayed and then lost.

From a terminal, there doesn't seem to be a way to send the GET request, then parse out the response headers and capture the data after the headers to a file or to memory. You need a program that is specifically designed to handle the bytes that come back from the socket connection in the appropriate way. But what does the appropriate way mean? That depends on the protocol being spoken over the socket. If you're going to open a socket to a webserver, you need a program that implements HTTP, at least partially.

There is a command line program, which can be compiled for modern computers, that does exactly this: wget. Wget stands for "web get" or "www get". It doesn't implement all of HTTP, just enough of it to execute a GET command, include some request headers, interpret the response headers, and save the response data out to a file. That's pretty much exactly what we need to fetch a generic file. You'd need something more specialized if you wanted to fetch images directly to memory, but leave that aside. Making a GET request and saving to a file would be plenty to get started playing around with webservices.

It so happens that the C64 NET WiFi modem comes with a set of programs ready to be used. Among them are FTP, IRC, and... WGET!

WGET for C64 Net (ZiModem, User Port)

WGET for the C64 Net modem is really easy to use. Once you've got the modem connected to your hotspot (which you can do using the included config program), you load and run WGET. It has a default URL pre-filled in, and a default output filename. You press the numbers to edit the fields. You can press 1 and then type in a new URL, like this:

http://services.c64os.com/image?if=jpg&u=https://www.c64os.com/resources/c64c-c64os-system.jpg

WGET does the job of specifying port 80, and of pulling out the domain and sending it as a host header. You can press 4 to add additional or custom headers. Press 3 to change the filename. By prefixing the filename with the Commodore DOS save-with-replace prefix @0:, you will overwrite any existing file with that name in the current partition. Ending the filename with ,p or ,s tells the DOS to create the new file as a PRG or SEQ file, respectively.

Gotchas and things to overcome

Transfer-Encoding: chunked

First, WGET is not perfect. I discovered in testing/debugging that WGET does not support "chunked responses." Typically, when you request a file from a server, the server reads the file size from disk, puts that file size into the Content-Length header, and then spits out the entire contents of the file in one continuous chunk of body data. The problem is that often HTTP GET is used to retrieve some dynamically generated content. Often the full size of that content cannot (fundamentally) be known, perhaps because it is a stream. It could be a never ending stream.

To deal with this, HTTP/1.1 has a "chunked" response format. Essentially, the response headers don't specify a single fixed length for the content, but instead include a "Transfer-Encoding: chunked" header.[4] When the transfer encoding is chunked, the server waits until it has a bit of data whose length it does know. Then it sends the size of that data as a hexadecimal number (in ASCII), followed by a carriage return and linefeed (CRLF, or \r\n). Immediately following that CRLF come the number of bytes specified, and then another CRLF. But that's not the end of the data, that's just the end of one chunk. As soon as you've read in one chunk, you're back to waiting for the next chunk size. The data keeps coming, chunk size followed by chunk data, over and over. When there is no more data, the server sends a final chunk with a size of 0, followed by an empty line (0\r\n\r\n).

It shouldn't be too tricky to support, but WGET (at the time of this writing) doesn't support it. And since the services.c64os.com image conversion webservice dynamically generates the output with PHP, it was outputting in chunked format by default. I made a special exception to override PHP's inclination to do this. I buffer the image output, then send out a proper Content-Length header manually, and then dump the converted image data buffer in one go. But, if you use WGET to try to fetch any old random content from the web, you will sooner or later bump into this shortcoming.
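For what it's worth, decoding chunked data really isn't much code. Here is a minimal Python sketch that follows the HTTP/1.1 chunked format: hexadecimal sizes, a CRLF after each size line and after each chunk's data, and a zero-size chunk at the end. It ignores chunk extensions and trailer headers, and the function name `decode_chunked` is hypothetical.

```python
def decode_chunked(raw: bytes) -> bytes:
    """Reassemble a chunked HTTP body into one contiguous block."""
    out = bytearray()
    pos = 0
    while True:
        eol = raw.find(b"\r\n", pos)
        if eol == -1:
            raise ValueError("incomplete chunk size line")
        # The size is ASCII hex; anything after ';' is a chunk extension.
        size = int(raw[pos:eol].split(b";", 1)[0], 16)
        pos = eol + 2
        if size == 0:
            return bytes(out)  # last chunk; trailers are ignored
        chunk = raw[pos:pos + size]
        if len(chunk) < size:
            raise ValueError("chunk is truncated")
        out += chunk
        pos += size
        if raw[pos:pos + 2] != b"\r\n":
            raise ValueError("missing CRLF after chunk data")
        pos += 2
```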

User Port Modems

Some modems connect to the C64's user port, but others (the faster ones) use a SwiftLink or Turbo232 or one of their modern clones. A terminal program, like DesTerm or Novaterm or others, can access a modem via a high-speed RS232 cartridge because it has special drivers to do so.

RS232 over the user port, though painfully slow, has something special: native support in the C64's KERNAL ROM. With a user port modem, you can open a connection to it from BASIC using the OPEN command with device #2 (RS232 on the user port). WGET, along with all the other C64 NET programs (FTP, IRC, etc.), is written in BASIC!! They have a special assembly component for speeding up transfers, but they ultimately depend on the KERNAL's RS232 device.

Aside: Had a conversation with Bo Zimmerman

I was a bit confused on this point, and didn't want to convey incorrect information. So I wrote to Bo Zimmerman (the ZiModem author) to ask about using WGET and/or the FTP program from the C64 Net disk with a Link232-Wifi modem, or with a GuruTerm modem connected to a Turbo232. Apparently there is an option to assemble a SwiftLink driver which, when loaded, wedges itself into the KERNAL so that when BASIC opens a connection to device 2, it actually talks to a modem via a SwiftLink.

That's very cool! But it still doesn't fully solve the problem. Apparently, WGET accesses the CIA that controls the user port directly. There is also an issue getting the SwiftLink driver and some of the other assembled components to work together, as they are assembled to the same places in high memory. And memory is quite constrained up there.

The long story short is that WGET is only usable, in its present implementation, with a User Port modem that is running the ZiModem firmware. If you don't have one of those, WGET can't be used for playing around with the services, and you'd have to either write your own version of WGET, or wait until C64 OS gets released and has support for HTTP over a wider range of WiFi modems.

Thoughts About Networking

I've sat on this post for a while, without knowing how to finish it.

I found that Bo Zimmerman's GitHub account has the source code for all things ZiModem. The modem firmware itself, the assembly components that can be used with BASIC to speed up and more reliably interact with the modem, the up9600 driver, and the small suite of useful BASIC programs.

I've been reading the source code to PML64 (Packet ML, where ML stands for machine language, in its C64 version). It's interesting. It demonstrates how, in 6502 assembly, to read the ZiModem packet header and extract the connection ID, packet data size and CRC8, converting each from a number string to an int. It also shows how to read the packet data, up to the size specified by the packet header, and compute the CRC8 on the packet data.

That all seems sufficiently straightforward to me. PML64 is a component for helping BASIC to work faster, it explicitly seeks out a BASIC string variable "p$" and manipulates it to point to a buffer somewhere from $C000 to $CFFF. PML64 itself really cannot be used by C64 OS, but it is useful to see how he's implemented it.
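For comparison in a higher-level language, here is the packet-reading logic described above as a Python sketch. Two loud assumptions: the header layout `[ <id> <size> <crc> ]` followed by a carriage return is hypothetical, for illustration only, and the CRC is assumed to be the common Dallas/Maxim CRC-8 (reflected polynomial 0x8C, initial value 0). Check ZiModem's own documentation and source for the real header format and CRC variant before relying on either.

```python
import re

# HYPOTHETICAL header layout, for illustration only: "[ id size crc ]\r"
HEADER = re.compile(rb"\[ *(\d+) +(\d+) +(\d+) *\]\r")


def crc8_maxim(data: bytes) -> int:
    """CRC-8/MAXIM (Dallas 1-Wire); assumed, not confirmed, for ZiModem."""
    crc = 0
    for byte in data:
        for _ in range(8):
            mix = (crc ^ byte) & 0x01
            crc >>= 1
            if mix:
                crc ^= 0x8C
            byte >>= 1
    return crc


def parse_packet(buf: bytes):
    """Return (connection_id, payload, remaining_bytes) or raise ValueError."""
    match = HEADER.match(buf)
    if match is None:
        raise ValueError("no packet header at start of buffer")
    # Convert the decimal number strings in the header to ints.
    conn_id, size, crc = (int(field) for field in match.groups())
    start = match.end()
    payload = buf[start:start + size]
    if len(payload) < size:
        raise ValueError("packet is truncated")
    if crc8_maxim(payload) != crc:
        raise ValueError("CRC mismatch")
    return conn_id, payload, buf[start + size:]
```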

Next I've been reading the BASIC source code to his WGET program. And here's where things really start to go off the rails for me. It's far more complicated than I had anticipated. It's not that I expect networking code to be simple; I know it's complicated. But the actual integration of WGET with the AT (Hayes) commands that are custom to ZiModem's firmware is far more tightly coupled than I want it to be. The WGET program, like most Commodore 64 programs, assumes that it owns the whole machine, and that it owns the whole modem. Which, technically, it does, so there is nothing wrong with what it's doing. But in the context of an operating system, no single program should have its fingers that deeply into the inner workings of the modem.

The first thing it tries to do is issue AT commands to change the modem's configuration, then checks its firmware version number so it can later branch, to support some features only available in version 3.

It uses special software flow control features that send an XOFF immediately after a packet has arrived, so that you can parse out and save the packet data at your leisure. Then, when you send an XON, the modem immediately gives you one more packet and goes back to XOFF automatically. WGET discards packets that don't match the one and only connection ID it has opened. If it finds data that doesn't match a packet at all, it loops blindly and madly over all the incoming data and /dev/null's it, waiting to get back to where it can issue another command. The commands it issues include codes for temporarily changing the baud rate(??!) for just this one connection, plus mask bytes, delimiter bytes, line ending modifications and PETSCII translation... all part of the AT command set, and mostly all custom to ZiModem.

My head is basically spinning. I need to figure out how to separate the layers, abstracting each component into something with a simple, reusable interface to the other layers.

My goal is simplicity of the API for an app developer. I want it to feel as easy as socket programming on a modern computer. Request an open connection to a host, get back a connection ID (or an error code). Write bytes to the connection ID, read bytes back from the connection ID. And if more than one connection is open, the driver layer needs to handle dividing the incoming packets into their respective buffers. Each open connection will get its own buffers. Actually, maybe not their own buffers. But, if an application opens a connection and gets an ID, when packet data comes in on that ID, perhaps it needs to generate an event that gets distributed in much the same way that other events are distributed. So the right process gets notified that data is waiting on its connection. And as the application reads the data, it can't merely be reading an arbitrary quantity of data from the modem buffer. It can't, for example, overread and get data that doesn't belong to it. There is so much to think about to figure out how this should work.
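As a thought experiment, here is roughly what that driver layer's bookkeeping might look like, sketched in Python rather than 6502. Everything here is hypothetical: the `ConnectionTable` class, the `ERR_SATURATED` error code and the event queue illustrate the design described above and are not C64 OS code. The `max_connections` limit stands in for socket saturation; a non-ZiModem modem would be given a limit of 1.

```python
from collections import deque

ERR_SATURATED = -1  # hypothetical error code: no free sockets


class ConnectionTable:
    """Hypothetical sketch: connection IDs, per-connection buffers, events."""

    def __init__(self, max_connections: int):
        self.max_connections = max_connections
        self.buffers = {}      # connection ID -> bytearray of unread data
        self.events = deque()  # IDs that have data waiting
        self.next_id = 1

    def open(self, host: str, port: int) -> int:
        """Return a new connection ID, or ERR_SATURATED."""
        if len(self.buffers) >= self.max_connections:
            return ERR_SATURATED
        conn_id = self.next_id
        self.next_id += 1
        self.buffers[conn_id] = bytearray()
        # A real driver would dial host:port here; this sketch only books it.
        return conn_id

    def deliver(self, conn_id: int, data: bytes) -> None:
        """Called by the modem layer when packet data arrives."""
        buffer = self.buffers.get(conn_id)
        if buffer is None:
            return  # no such connection: drop the data
        buffer += data
        self.events.append(conn_id)  # notify whichever process owns it

    def read(self, conn_id: int, max_bytes: int) -> bytes:
        """Read only from this connection's buffer, so it cannot overread."""
        buffer = self.buffers[conn_id]
        chunk = bytes(buffer[:max_bytes])
        del buffer[:max_bytes]
        return chunk

    def close(self, conn_id: int) -> None:
        self.buffers.pop(conn_id, None)
```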

Error correction, retries, etc, have to be done at a level that is transparent to the application. Unless something is unresolvable, the app shouldn't have to know about it.

Protocols on top of sockets will be implemented completely separately. The apps will either know about the protocols because they are implemented directly, or the protocols will be implemented by libraries that the app calls into. Things like PETSCII translation will have to be done at the protocol layer, not at the modem transmission layer. Because, some modems don't support commands for PETSCII translation, and besides which, some protocols (if they are C64-to-C64 protocols) don't need PETSCII-to-ASCII translation (or vice versa).
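As an example of translation living at the protocol layer, here is a Python sketch of the most common ASCII/PETSCII mapping for text in the C64's upper/lowercase character set, where the two encodings swap the letter cases. It deliberately handles only letters; control codes, line endings and graphics characters are passed through untouched, and the function names are hypothetical. A C64-to-C64 protocol would simply skip this step.

```python
def ascii_to_petscii(data: bytes) -> bytes:
    """Swap letter cases for the C64's upper/lowercase character set."""
    out = bytearray()
    for b in data:
        if 0x41 <= b <= 0x5A:      # ASCII 'A'-'Z' -> PETSCII $C1-$DA
            out.append(b + 0x80)
        elif 0x61 <= b <= 0x7A:    # ASCII 'a'-'z' -> PETSCII $41-$5A
            out.append(b - 0x20)
        else:
            out.append(b)          # everything else passes through
    return bytes(out)


def petscii_to_ascii(data: bytes) -> bytes:
    out = bytearray()
    for b in data:
        if 0x41 <= b <= 0x5A:      # PETSCII lowercase -> ASCII 'a'-'z'
            out.append(b + 0x20)
        elif 0xC1 <= b <= 0xDA:    # PETSCII uppercase -> ASCII 'A'-'Z'
            out.append(b - 0x80)
        elif 0x61 <= b <= 0x7A:    # alternate uppercase codes $61-$7A
            out.append(b - 0x20)
        else:
            out.append(b)
    return bytes(out)
```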

HTTP is essential, if only for accessing the webservices at services.c64os.com to get proxied, preconverted content. But I have been working on other, much simpler protocols for chat/instant-messaging, turn-based game state, etc. The simpler (non-HTTP) protocols could be used over a regular dialup modem or even a null modem connection between two C64s.

If the modem is ZiModem, well, it's a lot more complicated, but it's a lot more powerful too, because multiple connections can be opened simultaneously. If it's a non-ZiModem and you try to open a socket, it will have to open a single streaming connection, and any subsequent requests to open a connection will get an error that the total number of sockets is saturated. This could happen with ZiModem too; I'm just not sure how many connections it supports. With a single streaming connection on a non-ZiModem, there is no CRC checking at all, as there are no "packets."

The way that WGET works, there is no possible way to port it to non-ZiModems. I suppose the CRC checking and flow control could be migrated to a ZiModem driver.

It looks like it's going to take some serious work to get the C64 OS Networking working the way I want, and working reliably.

1. Great service! Much easier to maintain and set up than Amazon Web Services, which is more of an enterprise-level service. If you want to try Digital Ocean, you can also give me a little kickback by using this referral link.

2. You can find the C source code here.

3. Again, that's what was discussed in the weblog post, Webservices: HTML and Image Conversion.

4. You can read about this in more detail in Transfer-Encoding of the MDN Web Docs.

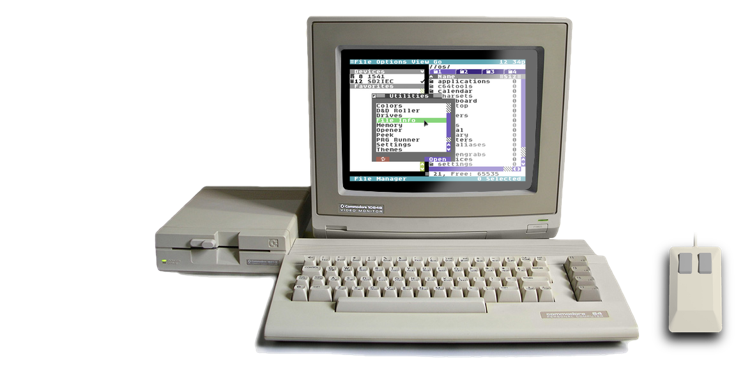

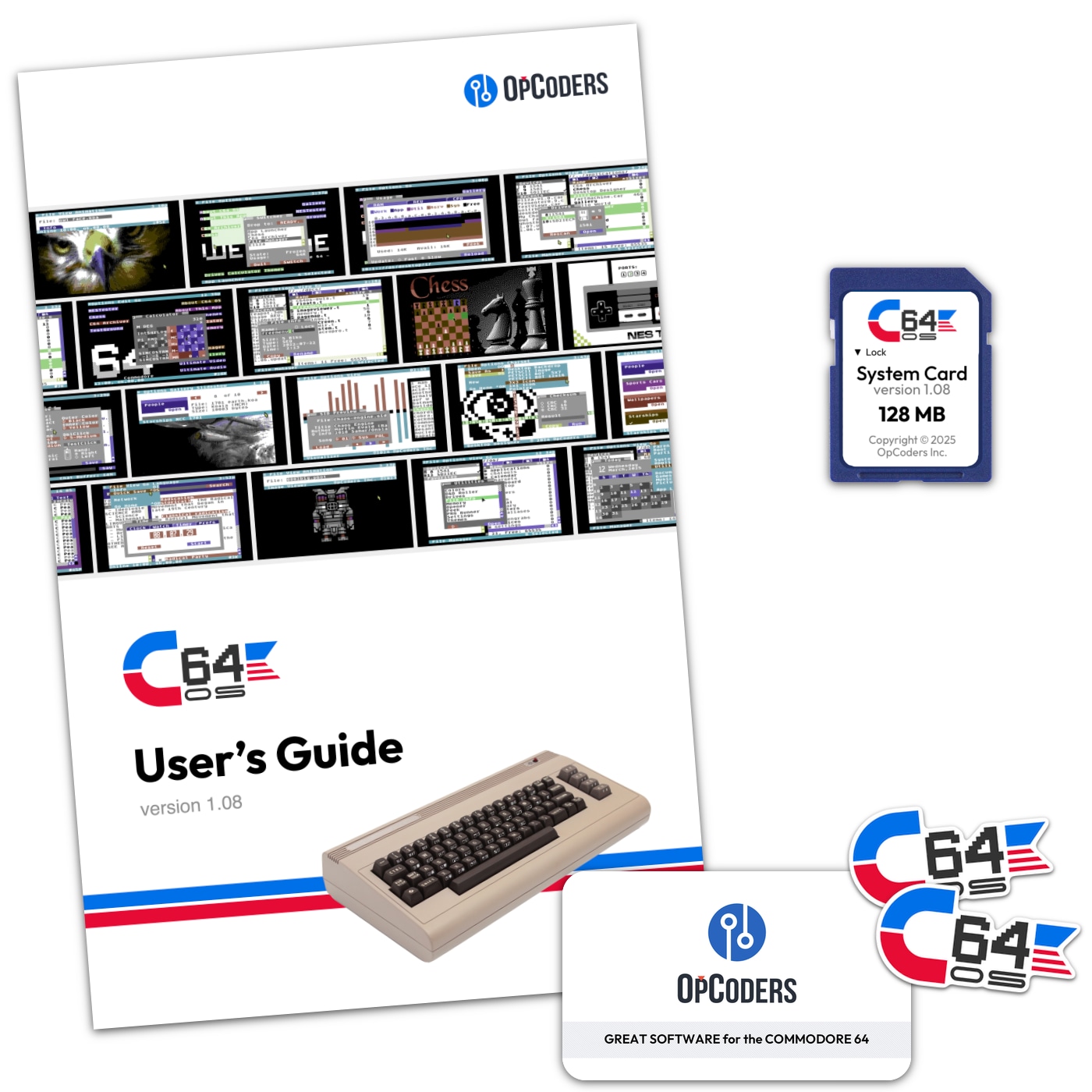


Greg Naçu — C64OS.com